Importance of Data in AI-Enabled Biological Models

Chapter 5 of the National Academies report on AIxBio: Biological Data as Strategic Infrastructure

This is the fourth post (a late addition) in a series about the new National Academies consensus report, The Age of AI in the Life Sciences: Benefits and Biosecurity Considerations.

The first is an introduction and overview of the report.

The second covers the “if-then” approach to AI and Biosecurity (AIxBio).

The third covers current capabilities across different levels of biological complexity.

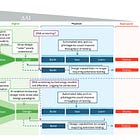

Chapter 5 argues that biological data are a leading indicator of emerging AI capabilities, and it also shows that high-quality, well-curated datasets are scarce outside a few areas such as protein sequences and structures. The report recommends treating biological databases as strategic national assets and creating AI-ready training datasets. This is less flashy than discussing what AI might do someday, but it’s arguably more important for both beneficial applications and risk management.

One of the more subtle but critical insights from the National Academies report concerns data infrastructure. While much attention focuses on AI algorithms and computational power, the report emphasizes that high-quality biological datasets are the fundamental prerequisite for developing capable AI models. This realization has implications for both advancing beneficial applications and managing biosecurity risks.

Biological data are the leading indicators of the emergence of new AI applications in the life sciences.

The report notes that foundation models like AlphaFold demonstrate what’s possible when you combine powerful algorithms with comprehensive, well-curated datasets. AlphaFold was trained on approximately 200,000 experimentally determined protein structures deposited in the Protein Data Bank over decades of structural biology research. This combination of scale, quality, and standardization is exceptional in biology. Most biological data are fragmented, inconsistent in format, and lack the standardized contextual information needed for effective machine learning.

The scarcity of high-quality datasets has direct consequences for AI capabilities. The report’s assessment that current models cannot reliably predict viral transmissibility or design novel pathogens stems largely from the absence of appropriate training data. Viral sequence databases contain millions of sequences, but few are linked to detailed phenotypic measurements of infectivity, pathogenesis, or immune evasion. Even for well-studied viruses like SARS-CoV-2, comprehensive genotype-to-phenotype datasets exist for only a small fraction of possible mutations.

Amassing significant datasets, sometimes generated through compute-intensive simulations, is a prerequisite for training AI models (e.g., PDB for AlphaFold); therefore, the availability of high-quality, robust data can be the leading indicator of an emerging or developing AI capability.

This data limitation is actually reassuring from a biosecurity perspective. It means that certain concerning capabilities remain out of reach not just because of algorithmic challenges, but because the requisite training data don’t exist. This is why the report identifies data availability as a leading indicator for emerging AI capabilities. Significant new datasets, particularly those linking genetic sequences to virulence or transmissibility, would signal potential capability advances worth monitoring.

The report argues that biological databases should be treated as strategic national assets, requiring robust data provenance mechanisms and careful curation. This recommendation serves dual purposes. High-quality, well-maintained datasets accelerate beneficial research in areas like drug discovery and vaccine development. Simultaneously, tracking what types of data are being generated helps with early detection of potentially concerning capability developments.

Federal research agencies that house or fund biological databases should consider these to be strategic assets and concordantly implement robust data provenance mechanisms to maintain the highest data quality. They should provide strong incentives and infrastructure for the standardization, curation, integration, and continuous maintenance of high-quality, publicly accessible biological data at scale.

The report also emphasizes the need for substantial federal investment in biological data infrastructure. This includes funding efforts to create AI-ready datasets, supporting public-private partnerships for data generation, and maintaining existing repositories. The National Artificial Intelligence Research Resource pilot is cited as one potential model for connecting data resources with computational infrastructure.

The same data infrastructure that enables beneficial AI applications also provides the foundation for potential misuse. The report doesn’t shy away from this dual-use reality, but argues that the solution isn’t to restrict data broadly. Instead, it advocates for strategic investment combined with monitoring, ensuring that datasets critical for public health and biomedical research remain accessible while tracking indicators of concerning capability development.

The data infrastructure discussion reveals how biosecurity and scientific advancement are inextricably linked. Progress in both domains depends on the same fundamental resource: comprehensive, high-quality biological data properly managed and made accessible to legitimate researchers.