Weekly Recap (April 17, 2026)

Opus 4.7, GPT-Rosalind for bio, what's a PhD for?, NIH chatbot research, code review for data, git, Sam Altman, Satoshi Nakamoto, Posit Assistant vs Claude Code, local LLM coding agents & R, papers...

Another day, another new model. Opus 4.7 is out. Right away it’s immediately noticeable how it shuts down pretty much any conversation about biology that even remotely appears dual-use. These prompts triggered a safety shutdown: “What is the role of gain- and loss-of-function mutations in pathogen evolution?” … “Give me media conditions for culturing SARS-CoV-2” … “What factors influence whether a pathogen spreads via droplets versus aerosols?” and several others I tried. What’s interesting is, as soon as you get a refusal from Opus 4.7, Claude will invite you to ask the same question on Sonnet 4, which happily answers.

Meanwhile, OpenAI released GPT-Rosalind, their frontier reasoning model built to support research across biology, drug discovery, and translational medicine.

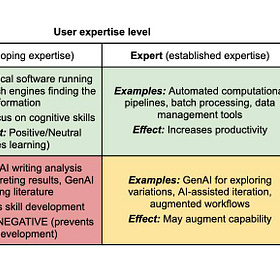

Arjun Krishnan: What is the PhD actually for?. A response to Prachee Avasthi’s “Free the PhD” and a self-critique of Krishnan’s own preprint on sequencing AI use in doctoral training. Both pieces agree that PhD programs waste protected time on the wrong things, but Krishnan argues that compressing content acquisition into AI-assisted sessions risks producing scientists who can articulate a field’s frontier without being able to navigate it. His resolution: the durable case for foundational expertise isn’t “AI can’t do this yet” but that science requires humans who can be genuinely accountable for claims, direct inquiry toward questions worth asking, and provide real transparency about their reasoning. See also:

Expertise Before Augmentation

Arjun Krishnan (lab, Bluesky), is a biomedical informatics researcher and co-director of PhD training programs at the University of Colorado Anschutz, has published a pair of complementary pieces that articulate something I’ve been thinking about for a while but

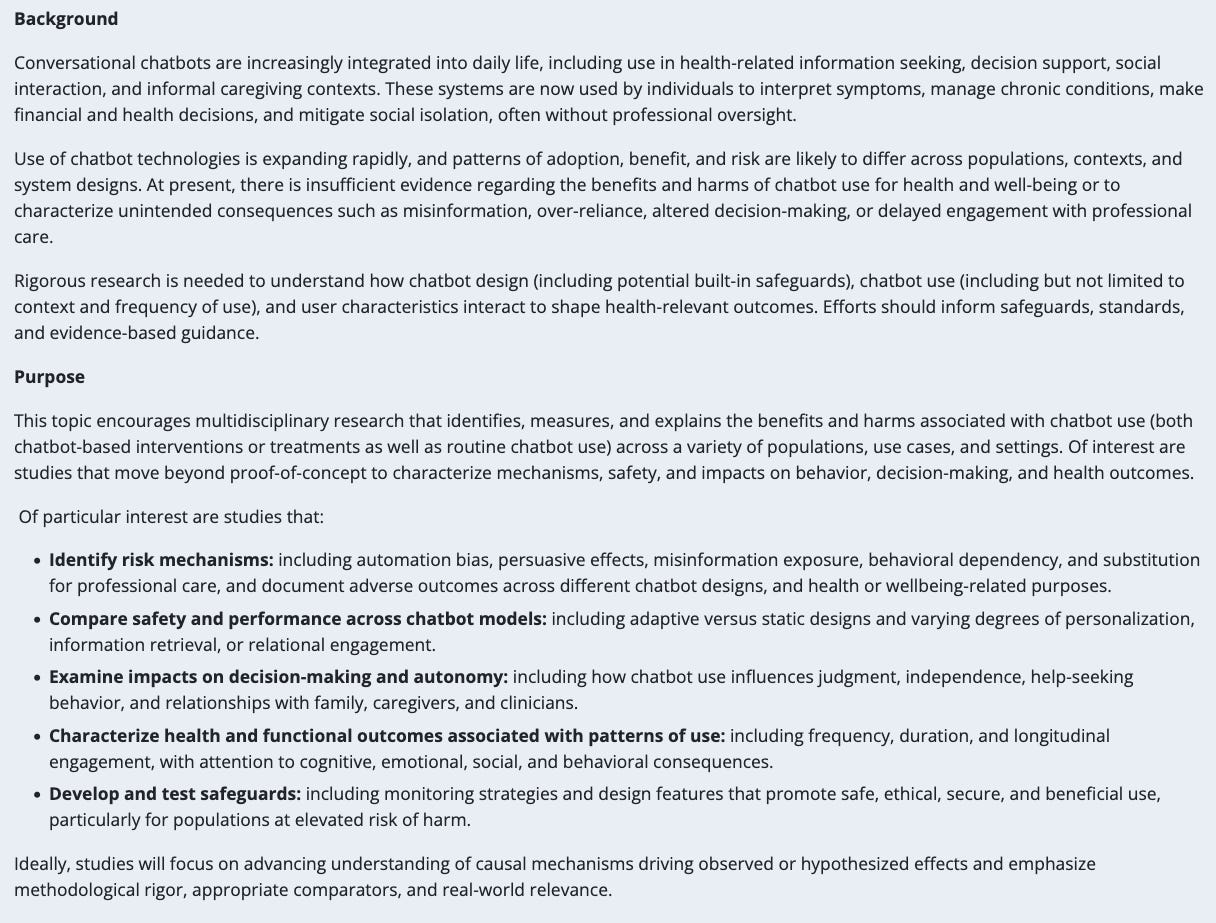

NIH Highlighted Topic: Research on Chatbots and their Usage. NIH posted a new Highlighted Topic (not a NOFO, but a signal of where institutes want to see investigator-initiated applications) on the benefits and harms of chatbot use in health contexts. The scope is broad: automation bias, behavioral dependency, substitution for professional care, effects on decision-making and autonomy, and safeguards for at-risk populations. Lots of participating ICOs. If you’re doing any research on LLM-based tools and health outcomes, this is NIH telling you there’s a welcome mat out. Apply through a PA; topic expires April 2027.

Randy Au: Code review for data (and non-SWE) folks. A practical, opinionated walkthrough of how Au approaches code review as a data person rather than a software engineer, with the core stance that your job is to help the code get better, not gatekeeping. He also makes a good observation about how LLMs have changed the review process: they’re useful for translating unfamiliar code into plain language so you can engage at the architectural level, but they still can’t substitute for a human who understands what the team is actually trying to accomplish.

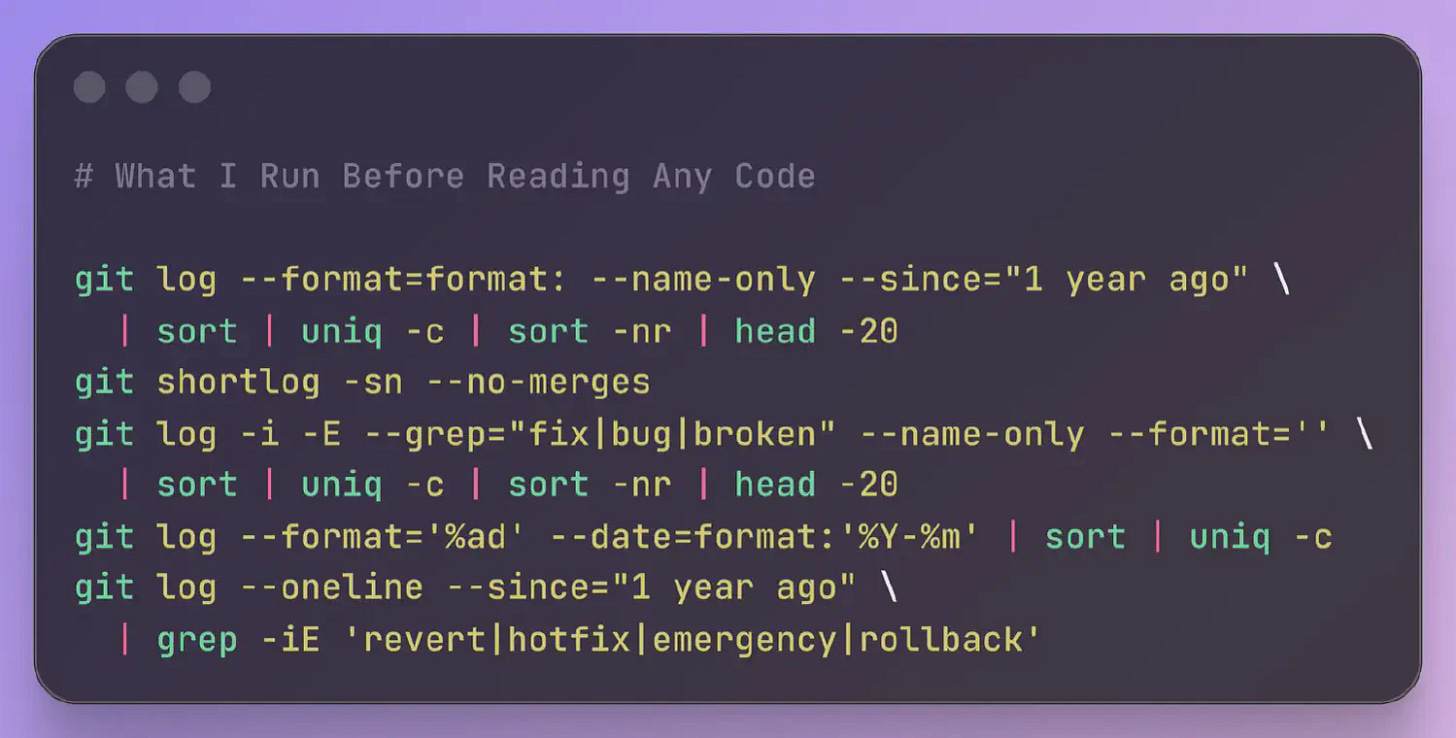

Ally Piechowski: The Git Commands I Run Before Reading Any Code. 5 git one-liners that give you a diagnostic picture of a codebase before you open a single file: churn hotspots, bus factor, bug clusters, commit velocity, and revert frequency. Pairs nicely with the Randy Au code review piece above, since both are about figuring out what’s actually going on in someone else’s code before you start forming opinions.

Separately, the New Yorker and the New York Times published very interesting long reads about Sam Altman and Satoshi Nakamoto. First, in the New Yorker, Ronan Farrow and Andrew Marantz: Sam Altman May Control Our Future. Can He Be Trusted?. Their investigation draws on interviews with more than 100 people in Altman’s orbit. The portrait that emerges is of someone with an unusual combination of interpersonal charm and indifference to the consequences of deception. Altman responded with a blog post acknowledging mistakes while framing the broader AI competition as a “ring of power” dynamic that distorts everyone’s behavior.

Writing in the New York Times, John Carreyrou: Who Is Satoshi Nakamoto? My Quest to Unmask Bitcoin’s Creator. Carreyrou broke the Theranos story spent over a year on this investigation and came away pointing at Adam Back, the British cryptographer who invented Hashcash (the proof-of-work system Bitcoin’s mining is built on). Back went quiet on cryptography mailing lists during Satoshi’s active years, resurfaced weeks after Satoshi vanished, and had written extensively about nearly every technical element of Bitcoin years before it launched. A stylometric analysis of nonstandard hyphenation patterns across hundreds of crypto mailing list authors matched Back. There was a really good hour-long episode of The Daily covering this one.

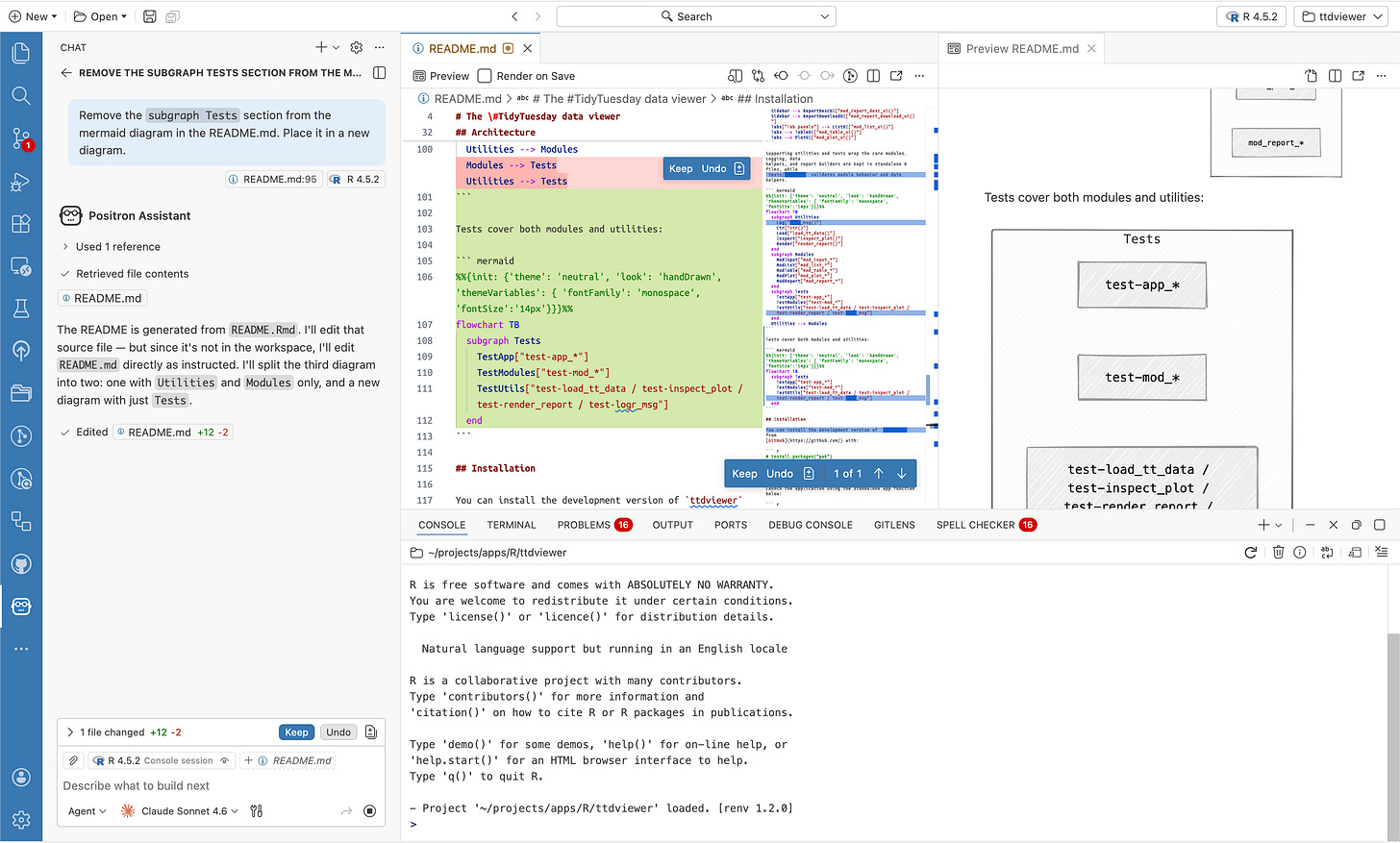

Comparing Posit Assistant and Claude Code: How does Posit Assistant differ from Claude Code? Sara Altman and Simon Couch demonstrate three ways Posit Assistant differs from Claude Code for data tasks: built-in R session access, easier data visualization workflow, and support for iterative data analysis.

scott cunningham: A professor's use case for AI generated papers. Cunningham bans AI from his stats and econometrics courses because he notes the 10-20 hour problem sets and the frustration of failing are where learning actually happens. But he found one use case he's comfortable with: he had Claude Code write three complete example papers (descriptive, predictive, causal inference) to illustrate what each genre looks like as a finished manuscript, because writing those himself would have been enormously time-intensive and he's not confident he'd do the descriptive and predictive genres well. Scott uses AI constantly in his own work but won't assign it to students, and he's honest that the line he's drawing is pragmatic, not principled.

Martin Frigaard: Who is this for? A reflection on how IDE-integrated LLM assistants are changing the way Martin thinks about problems, not just how he solves them. Complementary cognitive artifacts (maps, the abacus) transfer understanding you can retain after the tool is gone, while competitive ones (calculators, GPS, LLMs) leave you no better than when you started. Martin finds he actually prefers working in his restricted environment without IDE assistants, where communicating with an LLM looks more like composing a letter to a pen pal than approving autocomplete suggestions.

The Promise of AI and AI Agents: Another installment from Ryan Wright at AI Exchange @ UVA Substack. This video discusses When AI can act and not just advise: who should be in control, and where does the value actually land?

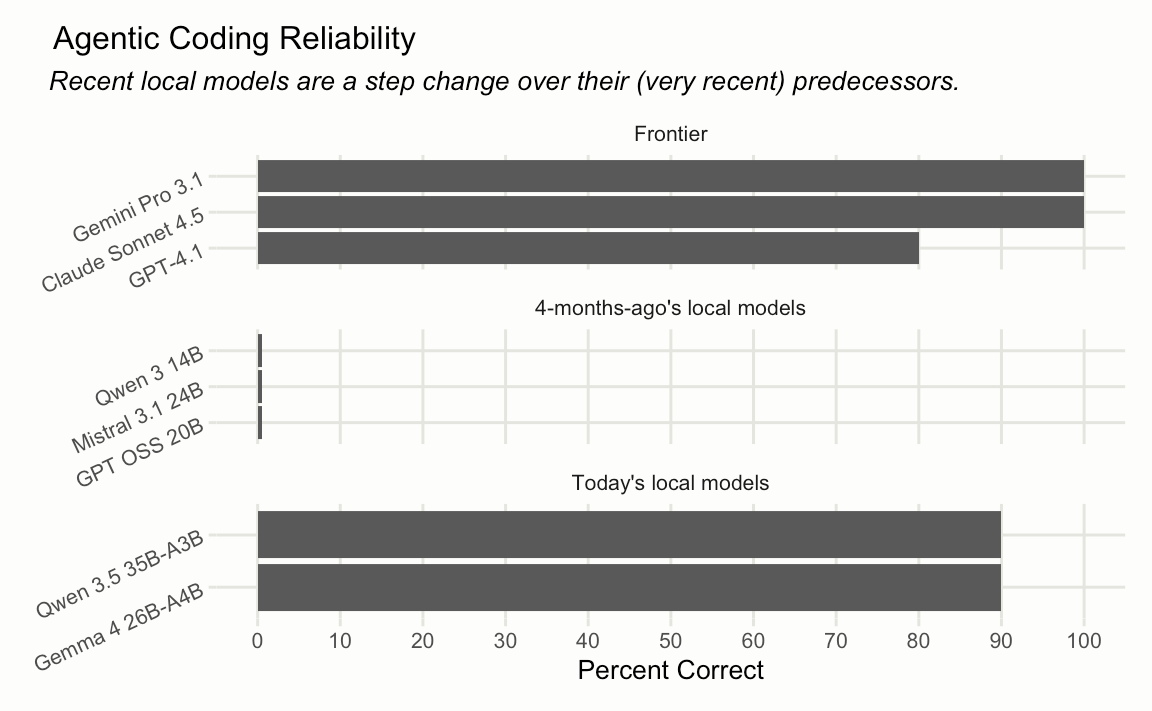

Simon Couch: LLMs running on my laptop can drive coding agents now. A follow-up to Couch’s December post where no local model could complete even a simple refactoring eval. Four months later, Qwen 3.5 and Gemma 4 both score 9/10, matching frontier models on the same benchmark. Neither is close to Opus 4.6 as a general coding partner, but both run at ~53 tok/s on a 48GB M4 Pro, which is surprisingly close to Sonnet 4.6’s API throughput, and that’s enough to keep you unblocked on a flight with miserable WiFi.

New papers & preprints:

Fast and accurate multiple-protein-sequence alignment at scale with FAMSA2

BioClaw: Human-Bot Research Collaboration Ecosystems in Group Chats

SkillClaw: Let Skills Evolve Collectively with Agentic Evolver

PaperOrchestra: A Multi-Agent Framework for Automated AI Research Paper Writing

Accelerated long-read variant calling with Clair3 for whole-genome sequencing