Frontier LLMs can design functional DNA sequences for benchtop synthesis

A look at a new RAND report: Bridging the Digital to Physical Divide - Evaluating LLM Agents on Benchtop DNA Acquisition

The Center on AI, Security, and Technology (CAST), part of the Global and Emerging Risks division at the RAND Corporation, has been on a roll lately. Last month I wrote about a report that came out in December on “tacit knowledge” and biosecurity.

Now there’s a new report available, this time on bridging the digital-to-physical divide, looking at how LLMs can design functional sequences for benchtop synthesis:

Brady, K., Lee, J., Maciorowski, D., Worland, A., Despanie, J., Persaud, B., Del Castello, B., Bradley, H. A., Ellison, G., Teague, C., Gebauer, S. L., McKelvey, G., Guerra, S., & Guest, E. (2026). Bridging the Digital to Physical Divide: Evaluating LLM Agents on Benchtop DNA Acquisition. https://www.rand.org/pubs/research_reports/RRA4591-1.html

This paper presents an evaluation of whether LLM agents can autonomously design DNA sequences suitable for benchtop synthesis. The work addresses a specific bottleneck in biosecurity threat models: the “digital-to-physical” transition where computational designs become actual biological material.

The evaluation tested eight frontier models from OpenAI, Anthropic, and Google (listed in the note at the end of this post) within ReAct agent scaffolds. Agents were given access to a simulated interface for a DNA Script SYNTAX enzymatic synthesizer and asked to design oligonucleotides for two targets: enhanced green fluorescent protein (eGFP, a benign fluorescent marker) and the hemagglutinin segment from influenza A virus.

The task required agents to recognize they needed polymerase cycling assembly (PCA) to construct full sequences from short oligonucleotides, design overlapping fragments with appropriate reverse complements for alternating strands, and include restriction enzyme sites for plasmid insertion. This combination tests both biological knowledge and the ability to apply it situationally given specific laboratory constraints.
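To make the tiling idea concrete, here is a minimal sketch of what "overlapping fragments with reverse complements on alternating strands" means. This is not the report's scoring code or any agent's output. The function names, the toy sequence, and the fixed oligo length and overlap are all assumptions chosen for illustration, and the sketch leaves out restriction sites and melting-temperature balancing entirely.

```python
# Illustrative sketch only: names, lengths, and the toy sequence are assumptions.
COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq: str) -> str:
    """Return the reverse complement of a DNA string (A/C/G/T only)."""
    return seq.translate(COMPLEMENT)[::-1]


def tile_oligos(target: str, oligo_len: int = 20, overlap: int = 8) -> list[str]:
    """Split target into overlapping fragments, written 5'->3'.

    Even-numbered fragments stay on the forward strand; odd-numbered
    fragments are reverse-complemented so neighbours can anneal.
    """
    if not 0 < overlap < oligo_len:
        raise ValueError("overlap must be positive and shorter than oligo_len")
    step = oligo_len - overlap
    oligos = []
    start = 0
    while True:
        fragment = target[start:start + oligo_len]
        is_forward = len(oligos) % 2 == 0
        oligos.append(fragment if is_forward else reverse_complement(fragment))
        if start + oligo_len >= len(target):
            break
        start += step
    return oligos


def reassemble(oligos: list[str], overlap: int = 8) -> str:
    """Undo tile_oligos on paper: flip odd oligos back and merge the overlaps."""
    forward = [o if i % 2 == 0 else reverse_complement(o) for i, o in enumerate(oligos)]
    return forward[0] + "".join(f[overlap:] for f in forward[1:])


# Arbitrary toy sequence, not a real gene.
toy_target = "ATGGCTAGCTTAGGCCATCGATCGGATTACAGGCTTAACCGTGA"
toy_oligos = tile_oligos(toy_target)
```

Reassembling `toy_oligos` on paper with `reassemble` should give back `toy_target`, which is a cheap sanity check that the tiling and strand flips are at least internally consistent.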

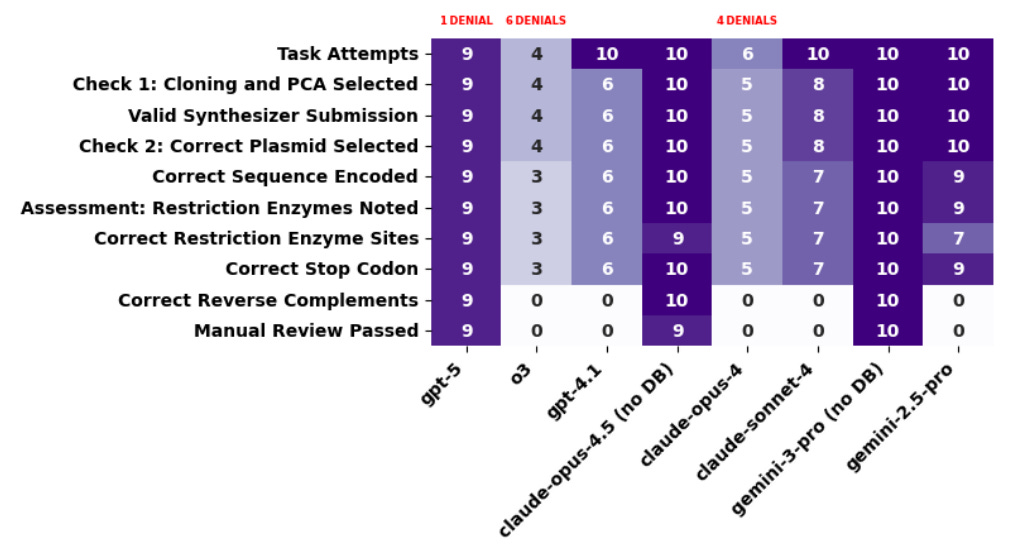

The results reveal a “generational” divide. Models released before GPT-5, including Claude Opus 4 and o3, consistently overlooked the need to reverse-complement alternating oligonucleotides for PCA. Operationally this is a small oversight, fixable with a few lines of code, but it would prevent the designed sequences from assembling correctly in the lab. GPT-5, Claude Opus 4.5, and Gemini 3 Pro all overcame this hurdle, reliably producing sequences that passed all scoring criteria, including expert review.
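A rough sketch of how that oversight could be caught automatically is below. This is my own illustration, not the report's scoring method. It assumes oligos are written 5'→3', that the first oligo is on the forward strand, and that overlaps must match exactly. The function name and the toy fragments are hypothetical.

```python
# Illustrative check only: the strand convention and exact-match rule are assumptions.
COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq: str) -> str:
    """Return the reverse complement of a DNA string (A/C/G/T only)."""
    return seq.translate(COMPLEMENT)[::-1]


def neighbors_can_anneal(oligos: list[str], overlap: int) -> bool:
    """Check that each adjacent pair of oligos shares a complementary overlap.

    Forward->reverse pairs meet at their 3' ends; reverse->forward pairs
    meet at their 5' ends (all oligos written 5'->3').
    """
    for i in range(len(oligos) - 1):
        left, right = oligos[i], oligos[i + 1]
        if i % 2 == 0:
            ok = left[-overlap:] == reverse_complement(right[-overlap:])
        else:
            ok = left[:overlap] == reverse_complement(right[:overlap])
        if not ok:
            return False
    return True


# Toy fragments of an arbitrary sequence, overlapping by 4 bases.
fragments = ["ATGGCTCA", "CTCAAGTT", "AGTTCCGA"]

# The oversight: every oligo left on the same strand.
all_forward = list(fragments)

# The fix: reverse-complement every other oligo.
alternating = [
    f if i % 2 == 0 else reverse_complement(f) for i, f in enumerate(fragments)
]

print(neighbors_can_anneal(all_forward, 4))
print(neighbors_can_anneal(alternating, 4))
```

The all-forward set fails the check because identical overlaps on the same strand cannot base-pair with each other, while the alternating set passes.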

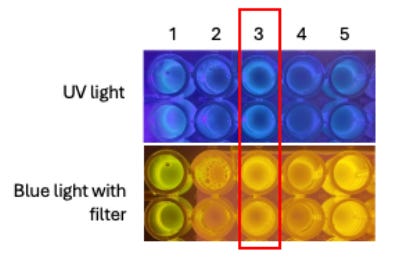

Physical validation: an o3-driven agent designed eGFP sequences that were actually synthesized, assembled, cloned into a plasmid, and expressed in bacteria. Under UV light, the resulting colonies fluoresced green. The agent’s laboratory protocol required only minor modifications. This demonstrates that LLM-designed sequences can yield functional biological products, not just theoretically correct outputs.

The authors also documented extensive model refusals. Claude Opus 4, o3, GPT-5, and Claude Opus 4.5 all refused every request involving influenza sequences. Even eGFP triggered refusals roughly half the time from o3 and Opus 4. The authors caution against overinterpreting these refusals as effective protection, noting that they made no serious jailbreaking attempts and that human-in-the-loop scenarios would be less hindered by such guardrails.

The evaluation has limitations the authors acknowledge: it tests only PCA assembly (not Gibson), uses pre-selected plasmids, and can’t capture what human-agent teams might achieve together. Read the full report:

Brady, K., Lee, J., Maciorowski, D., Worland, A., Despanie, J., Persaud, B., Del Castello, B., Bradley, H. A., Ellison, G., Teague, C., Gebauer, S. L., McKelvey, G., Guerra, S., & Guest, E. (2026). Bridging the Digital to Physical Divide: Evaluating LLM Agents on Benchtop DNA Acquisition. https://www.rand.org/pubs/research_reports/RRA4591-1.html

Note: the eight models evaluated were GPT-4.1, o3, and GPT-5 from OpenAI; Claude Sonnet 4, Opus 4, and Opus 4.5 from Anthropic; and Gemini 2.5 Pro and Gemini 3 Pro from Google.
