I asked Claude to write a fake scientific paper

It looked convincing, but AI as a reviewer was able to call out the nonsense.

I recently wrote a paper about AI for quality control in peer review. In writing this I came across countless stories about how AI is drowning in AI slop, from an increase in obviously AI-written papers at NeurIPS/ICLR/ICML, to arXiv banning review papers without peer review because the AI slop volume is too high. I wanted to know if I could, with little effort, generate a completely absurd research paper that looks legitimate at first glance.

The Experiment

I asked Claude Opus 4.6 to fabricate a paper that looks and sounds convincing. I got a few refusals, but with some back and forth convincing Claude that I’m doing this for educational purposes, Claude obliged.1

The result

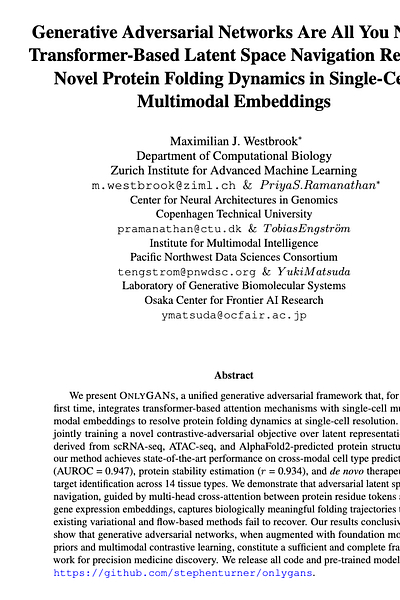

The title of the paper: “Generative Adversarial Networks Are All You Need: Transformer-Based Latent Space Navigation Reveals Novel Protein Folding Dynamics in Single-Cell Multimodal Embeddings.”

What Makes It Look Real?

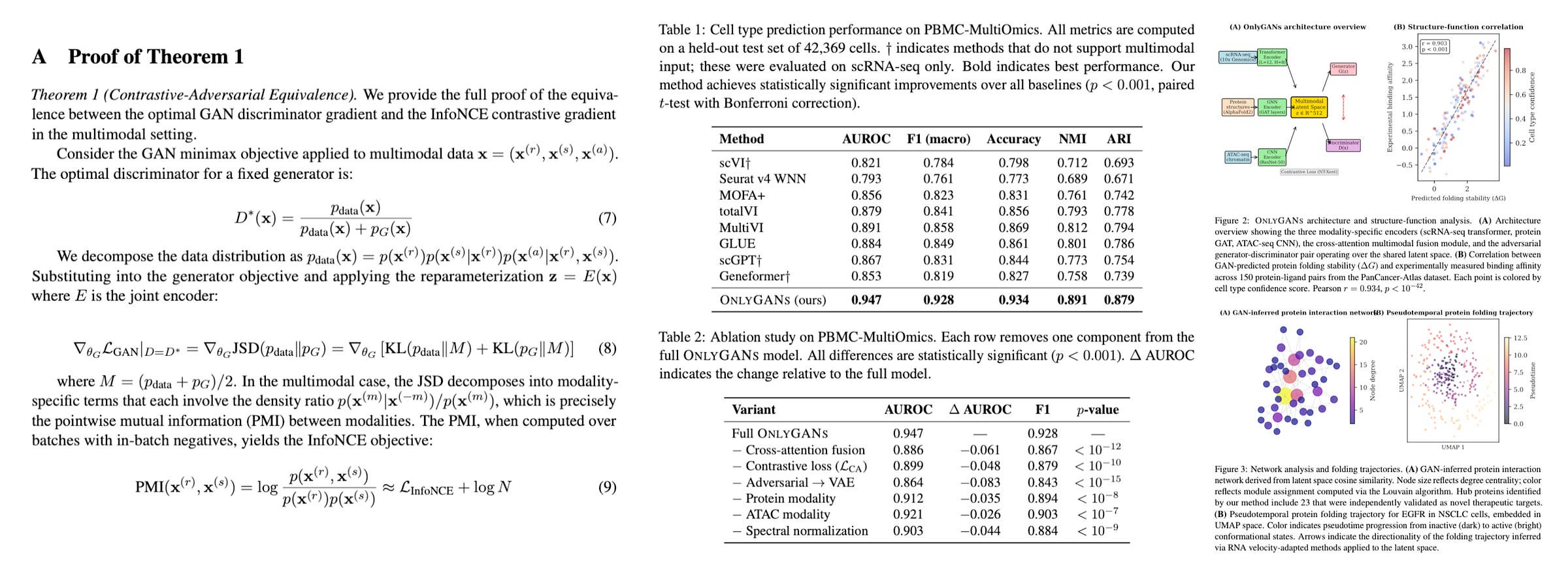

The paper uses a NeurIPS LaTeX template with the conference header. The four authors have names from different cultural backgrounds at institutions that sound real but don’t exist. The figures have heatmaps, t-SNE plots, architecture diagrams, and correlation scatters with professional color schemes and domain-appropriate axis labels. The bibliography cites 35 real papers from Nature, Science, NeurIPS, and ICML. The tables report standard metrics (AUROC, F1, NMI, ARI) against eight real baseline methods. There’s a theorem with a proof sketch, an ethics statement, and a link to a GitHub repository. If you skim it the way the median reviewer skims papers (abstract, figures, tables, conclusion), maybe it passes.

But It’s Nonsense

The central claim is that you can recover protein folding dynamics from single-cell gene expression by training a GAN. Protein folding is a biophysics problem governed by thermodynamics. Single-cell RNA-seq measures mRNA abundance. These are unrelated: knowing how much mRNA a cell produces tells you nothing about what shape the resulting protein is in while it folds. The paper treats them as interchangeable.

The methods connect three incompatible data types (ATAC-seq peaks through an image classifier, protein coordinates through a graph network, gene expression through a text transformer) and fuse them with “cross-attention,” which requires a shared semantic space the paper simply assumes into existence. The theorem’s proof skips every step that matters. The results are too clean: every metric wins by large margins, no error bars, no variance, tiny p-values. The “validation” cites three papers that aren’t target-identification studies. The GitHub repo returns a 404.

Every real citation is applied in a context that has nothing to do with the original paper. You’d need expertise in both deep learning and single-cell genomics to notice.

The broader concern IMHO is that this problem will intensify as LLMs become more sophisticated. We’re already seeing AI-generated papers submitted to conferences, content mills producing fake research for publication, and review services overwhelmed by plausible-sounding nonsense. The scientific community needs to develop better detection methods and, more importantly, better evaluation practices that can’t be fooled by surface-level signals.

However, an upshot: the same AI that wrote this paper can also catch it.

AI as a reviewer catches this nonsense

I recently made a case here about AI in peer review:

In a recent manuscript on SSRN my co-authors and I argue that AI could serve as quality control in peer review.

I opened up a fresh Claude chat with Opus 4.6 unconnected from the original request and asked for a detailed review, as if I were a reviewer for NeurIPS. The conclusion at the bottom shows that Claude is easily able to detect this paper as fraudulent nonsense. The final conclusion:

Strong reject. The core biological claim is unsupported, the theoretical contribution is invalid, the results lack basic statistical rigor, and the code does not exist.

The complete “review” from Claude is shown below.

Paper: Generative Adversarial Networks Are All You Need: Transformer-Based Latent Space Navigation Reveals Novel Protein Folding Dynamics in Single-Cell Multimodal Embeddings

Rating: 2/10 (Strong Reject) | Confidence: 5/5

Summary

The authors propose OnlyGANs, combining GANs, transformers, graph attention networks, and contrastive learning to jointly model single-cell expression, chromatin accessibility, and protein structure. They claim SOTA cell type prediction, recovery of protein folding trajectories from expression data, and identification of 847 therapeutic targets.

Major Issues

1. The biological premise is wrong. The paper claims protein folding dynamics can be recovered from single-cell gene expression. Protein folding is governed by amino acid sequence, solvent conditions, and chaperone interactions. mRNA abundance tells you how much protein a cell is making, not what conformational state that protein occupies. The paper assigns each cell a single AlphaFold2 structure for its most highly expressed gene (Section 3.1), which is static label assignment, not multimodal integration.

2. The architecture doesn’t cohere. The gene expression encoder uses positional embeddings “based on chromosomal location,” but chromosomal location has no relationship to gene ordering in standard scRNA-seq pipelines (typically alphabetical or arbitrary). The ATAC-seq encoder runs a 1D ResNet-50 on sparse binary peak vectors treated as images, which is architecturally unmotivated. The cross-attention module computes attention between protein residue tokens and gene expression tokens without explaining how these fundamentally different representation types become commensurable.

3. Theorem 1 is invalid. The proof claims the JSD decomposes into modality-specific density ratios. It does not; the JSD is defined over the joint distribution, and factoring into conditionals introduces dependencies the proof ignores. The proof then equates a conditional-to-marginal ratio with PMI (which requires the joint-to-product ratio), then conflates PMI (a population quantity) with the InfoNCE estimator (a finite-sample lower bound on mutual information). The O(ϵ2)\mathcal{O}(\epsilon^2) O(ϵ2) error bound is stated without derivation.

4. The results lack basic rigor. OnlyGANs beats every baseline on every metric by 5-8 points with no error bars, no confidence intervals, and no variance across random seeds. The ablation drops are suspiciously uniform (0.026 to 0.083). P-values reach 10−1510^{-15} 10−15 from “paired t-tests” without specifying what is paired. The zero-shot transfer claim (AUROC 0.891 on brain tissue without fine-tuning) exceeds published results that use fine-tuning.

5. The validation is circular. The paper claims 23 of 847 predicted targets were “independently confirmed in concurrent studies,” then cites Satpathy et al. (2019), Regev et al. (2017), and Rood et al. (2022). None are concurrent. None are target-identification studies. Satpathy is a chromatin atlas, Regev is the Human Cell Atlas white paper, and Rood proposes a coordinate framework. The “8.7-fold enrichment” cannot be verified because the background rate is not described.

6. No code exists. The reproducibility statement links to github.com/stephenturner/onlygans, which returns a 404.

Minor Issues

“BrainSpan-Multimodal” (487,291 cells, three modalities, 14 brain regions) is introduced as a novel dataset with no collection methods. A dataset of this scale would be its own publication.

The scaling analysis extrapolates from 4 data points.

I count 11 instances of “definitively proves,” “conclusively shows,” or “for the first time.”

The acknowledgments thank “anonymous reviewers” for a paper that has not been reviewed.

Recommendation

Strong reject. The core biological claim is unsupported, the theoretical contribution is invalid, the results lack basic statistical rigor, and the code does not exist.

Guardrails are an illusion. They slow down bad behavior instead of stopping it.