Weekly Recap (April 2, 2026)

AI automating AI research, AI + being PI, DL course, Neion Bio, NIH highlighted topics, TIP, Python type checking in Positron, R updates, biosecurity, NIH forecast graveyard, serendipity, new papers.

Hello, friends. This recap comes a day early because I’ll be leaving tomorrow for a long overdue holiday in France. No updates next week. Au revoir mes amis. 🇫🇷🧀🍷

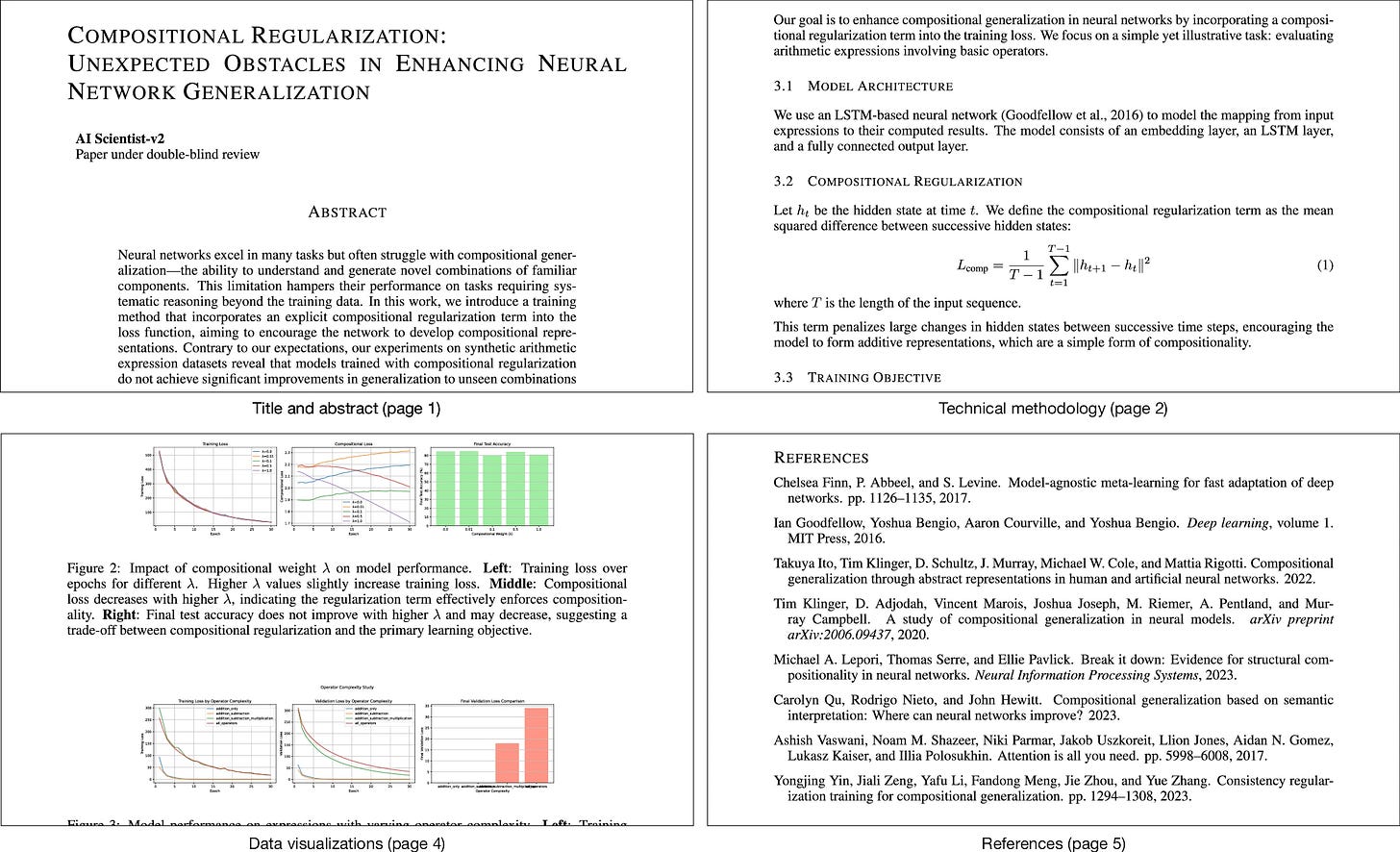

Chris Lu, et al., in Nature: Towards end-to-end automation of AI research. Sakana AI’s “AI Scientist” pipeline handles the full ML research loop: ideation, literature search, experiment design and execution, paper writing, and automated peer review. One of its manuscripts scored above the acceptance threshold at an ICLR 2025 workshop (which had a 70% acceptance rate, to be fair). Paper quality as judged by their automated reviewer tracks closely with foundation model capability, and with compute budget per paper, which tells you where this is headed even if the current output isn’t threatening anyone’s tenure case. For a quicker summary, read Sakana’s blog post.

Counterpoint: Steven Salzberg writes AI badly needs a dose of skepticism. Salzberg goes after DNA foundation models, arguing that their central claim (predict the effects of any mutation from sequence alone) is biologically implausible and largely unfalsifiable, two properties he knows well from years of writing about pseudoscience nonsense (homeopathy, accupuncture). Teams build ever-larger models first, then go looking for problems, which is backwards. The core critique of unfalsifiable prediction claims and Nature’s eagerness to publish them is hard to dismiss. See above.

Arjun Raj: Transitioning to Being a PI in the Age of AI. A short and honest post about the asymmetry in how faculty and trainees experience the current AI moment in computational biology. Faculty are exhilarated because they’ve spent years developing the skill of evaluating analyses without doing them line by line; trainees are more ambivalent because they’re being asked to make that same transition in months rather than years or decades.

My SDS colleague Heman Shakeri released full materials for his Deep Learning Course here at UVA. A complete, openly licensed (CC BY 4.0) deep learning course from UVA’s School of Data Science, built for the online MSDS program (DS 6050) and public since Fall 2025. The 12-module sequence starts with NumPy-first implementations of MLPs and backpropagation, moves through CNNs, RNNs, encoder-decoder architectures, and the full attention/transformer stack, and finishes with ViTs, LoRA/QLoRA, and generative models including diffusion. Each module has lecture videos, notes, slides, and Colab assignments with unit tests. The syllabus lays out the pedagogical logic: three phases moving from from-scratch understanding to architectural depth to modern practice. Too much deep learning education lives in disconnected repos and YouTube playlists; having everything in one structured, reusable site with a clear arc is more valuable than any single component on its own.

Carl Zimmer, NYT: How to Turn a Chicken Egg Into a Drug Factory. Neion Bio, a startup that emerged from stealth this week, is engineering chickens whose eggs produce pharmaceutical proteins, potentially replacing the Chinese hamster ovary (CHO) cell lines that currently dominate biologic drug manufacturing. The company claims 3,900 hens could meet global demand for Humira at a fraction of the cost of a CHO facility (Merck just broke ground on a $1B Keytruda plant, for comparison). Sven Bocklandt, Neion's chief scientific officer, was a colleague of mine at Colossal, where we worked on the dire wolf program together. Zimmer's writeup (great as usual) discussesthe history of how CHO cells became the default and why advances in primordial germ cell manipulation are finally making avian biomanufacturing viable.

New NIH Highlighted Topic: Advancing “Science of Science” Research to Understand and Strengthen the Biomedical Research Ecosystem. These are not NOFOs, but descriptions of scientific areas that NIH ICOs are interested in funding through existing parent announcements. This one encourages investigator-initiated applications on the “science of science,” the study of how the biomedical research ecosystem itself works. Topics include workforce retention, research capacity building, rigor and reproducibility, translation bottlenecks, and the economic returns of research investment.

Yet another new NIH Highlighted Topic: BRAIN Initiative: Advancing Human Neuroscience and Precision Molecular Therapies for Transformative Treatments. This one covers the BRAIN Initiative’s priorities in human neural circuit research, clinical neurotechnology, and precision molecular therapies (optogenetics, chemogenetics). 11 ICOs are listed as participating.

More NIH news: NOT-OD-26-064: Update of NIH Late Application Submission Policy and End of Continuous Submission. NIH is ending its Continuous Submission policy, which let PIs serving on review panels submit applications outside normal deadlines. Effective for due dates on or after May 25, 2026.

TIP Leadership Update. NSF's Erwin Gianchandani announces the retirement of Gracie Narcho, who served as deputy assistant director and directorate head for the Technology, Innovation and Partnerships directorate since its founding. Gianchandani credits Narcho with co-authoring the vision that became TIP before it had authorizing legislation, and with launching programs like the NSF Regional Innovation Engines and the I-Corps Hubs during a career spanning three decades and multiple NSF directorates.

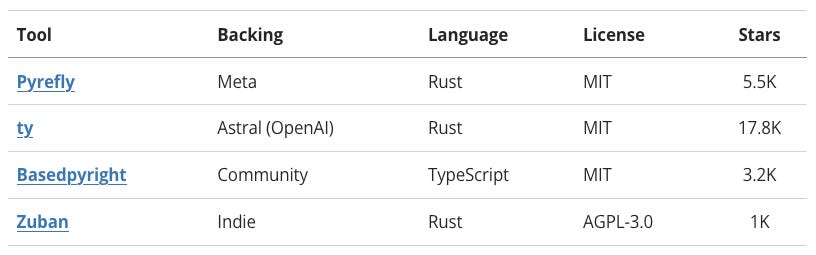

Austin Dickey: How we chose Positron's Python type checker. Posit evaluated 4 open-source Python language servers (Pyrefly, ty, Basedpyright, Zuban) across features, correctness, performance, and ecosystem health, then chose Meta's Pyrefly as Positron's default. The most interesting section is the comparison of type-checking philosophies: ty follows a "gradual guarantee" where removing a type annotation never introduces an error, while Pyrefly infers types aggressively even in untyped code. Good overview of a space that's moving fast.

Mario Zechner: Thoughts on slowing the f*ck down. A year into production use of coding agents, Zechner argues that the compounding of small errors at machine speed, combined with agents’ inability to learn from mistakes and their low-recall search over large codebases, is producing unmaintainable messes far faster than human teams ever could. The prescription: treat agents as task-level tools with humans as the quality gate, write your architecture by hand, and set deliberate limits on how much generated code you accept per day.

Theo Roe: Why Learning R is a Good Career Move in 2026. A short, beginner-oriented pitch from Jumping Rivers (an R training company, so calibrate accordingly) making the case for R as a first language for data work. Nothing new for experienced practitioners, but a reasonable overview of where R still has a strong foothold: healthcare, pharma, government, academic research, and anywhere visualization and reproducible reporting are central. The honest caveat at the end is useful: if you want software engineering or large-scale production systems, you probably need Python.

Matt Lubin at Bio-Security Stack: Five Things: March 29, 2026: Anthropic temporary win, scheming, biodesign by LLM, White House advisors, Anthropic security.

Ryan Layer: What do I teach now?. Ryan has taught Software Engineering for Scientists at CU Boulder since 2019, and coding agents have forced him to rethink the whole course. In science, code is the method, so vibe coding is a reproducibility problem in addition to being a quality problem. He’s now rebuilding the class around open questions like who audits AI-generated analyses in ten years if no one learns to build from scratch.

The thought of my students building software by prompting and accepting the output without reading the code keeps me up at night. […] For science, where the code is the method, vibe coding is not an option.

Claus Wilke at Genes, Minds, Machines: Creating reproducible data analysis pipelines. A case against the “run everything from raw data” ideal of reproducibility. Claus argues that intermediate CSV files saved right before plotting are more durable than any end-to-end pipeline: pipelines break, Docker images rot,1 and students (and PIs!) lose afternoons rerunning everything to swap a violin plot for a boxplot.

rOpenSci News Digest, March 2026: dev guide, champions program, software review and usage of AI tools.

Joe Rickert: February 2026 Top 40 New CRAN Packages: AI, machine learning, biology, medical applications, physics, Buddhism, statistics, climate science, computational methods, data, surveys, ecology, time series, epidemiology, utilities, genomics, and visualization.

R Weekly 2026-W14: ggauto, alt text, scientific coffee.

Max Kuhn: tabpfn 0.1.0. An R interface (via reticulate) to TabPFN, a pre-trained deep learning model for tabular prediction from PriorLabs (I wrote a this short summary of TabPFN last year). The model was trained entirely on synthetic data generated from complex graph models simulating correlation structures, skewness, missing data, interactions, and more. No fitting happens on your data; your training set primes an attention mechanism via in-context learning.

Elizabeth Ginexi: Inside the NIH Forecast Graveyard. An accounting of NIH funding opportunities that were announced on grants.gov and then never published. Of 336 open forecasts, 205 have passed their promised posting dates with no explanation. The first wave of cancellations in April 2025 was keyword-driven (DEI, HIV, health disparities), but the later waves and the larger mass of silently expiring forecasts hit basic science, clinical infrastructure, and congressionally mandated programs like the BRAIN Initiative and Gabriella Miller Kids First. Ginexi, a former NIH insider, makes the dataset available for anyone to check.

Niko McCarty: Many Great Inventions Weren’t Made by “Serendipity”. Niko uses optogenetics as the central case for a broader argument: the breakthroughs we narrate as lucky accidents were usually preceded by years of deliberate preparation and systematic enumeration of possible solutions.

New papers & preprints:

Toward next-generation machine learning and deep learning for spatial omics

High-resolution metagenome assembly for modern long reads with myloasm

The Age Illusion — Limitations of Chronologic Age in Medicine

Accelerating coral assisted evolution to keep pace with climate change

SNP calling, haplotype phasing and allele-specific analysis with long RNA-seq reads

State AIDS Drug Assistance Programs’ Contribution to the US Viral Suppression, 2015–2022

Paper on this topic coming soon. Stay tuned.