Five Things: May 7, 2026

BioMysteryBench, the Mythos vetting U-turn, USC’s $200M AI bet, Yihui Xie on AI coding, NIH Highlighted Topics

I’ve been writing about what’s interesting to me in a “Weekly Recap” series of posts every Friday. I’ll keep playing with this format, but for now I’m trying the “Five Things” format I’m stealing from Matt Lubin at Bio-Security Stack, going deeper on fewer topics.

This week’s lead item is the White House walking back its hands-off posture and floating a UK-style pre-release review for frontier models, with Anthropic’s Mythos as the proximate cause. There’s also some bioinformatics-specific benchmarking that Anthropic is doing, a $200M university gift that says something about where universities think they fit in AI, and a good essay from Yihui Xie (of knitr and “down” packages fame) on what AI-assisted coding feels like from the inside. Matt’s Five Things from Monday covered a lot of last week’s biosecurity ground, so I’ll try not to retread too much here.

The White House decides it might want a say in what Anthropic ships

BioMysteryBench, and what “human-difficult” actually means

USC takes $200M to “infuse” AI across the university

Yihui Xie on vibe-coding in a language he doesn’t know

New NIH Highlighted Topics

1. The White House decides it might want a say in what Anthropic ships

The New York Times reports that the Trump administration is now considering an executive order to set up a working group on pre-release review of frontier AI models, possibly modeled on the UK’s process. White House officials reportedly briefed Anthropic, Google, and OpenAI on the plans last week. The framing in the piece is that this reverses the administration’s earlier “let the baby thrive” posture, which in practice meant rolling back the Biden-era reporting requirements for models with potential military applications.

The proximate trigger, per the reporting, was Anthropic’s Mythos announcement. Anthropic said the model was capable enough at finding software vulnerabilities that releasing it could trigger a cybersecurity “reckoning,” and declined to ship it broadly. Some officials are now pushing for a system that gives the government first look at new models without blocking release. Others, per the article, want to know whether next-generation models might offer cyber capabilities useful to the Pentagon and intelligence community. Both motives can coexist, and they probably do.

Pair this with the fact that Anthropic was simultaneously being blocked by the White House from expanding Mythos access to its corporate customers, and being sued by the DOJ over the Pentagon’s “supply chain risk” designation. One administration wants this specific company’s most capable model for itself, doesn’t want it going to other people, was last month trying to wall the company off from defense contractors entirely, and is now also thinking about a formal review regime for everyone. Generously, that’s policy moving in real time and different parts of the executive branch wanting different things. Less generously, “voluntary” doesn’t mean voluntary when one buyer can decide who else gets access.

Bipartisan unease is a rare resource and it tends to attract regulators, which I suspect is more of what’s actually driving the working group than anyone wants to say on the record. The obvious incentive problem with a UK-style review run as an executive-branch working group with no statutory backing: it gives whoever’s in office discretion over which models reach which customers, and “national security” is a flexible category. It would be great (wouldn’t it?) if this were codified by Congress, with the criteria written down. Neither seems imminent.

2. BioMysteryBench, and what “human-difficult” actually means

Anthropic released BioMysteryBench, 99 bioinformatics questions written by domain experts, where Claude is dropped into a container with the usual tools (pip, conda, NCBI, Ensembl) and asked to figure things out. Anyone who’s reviewed a bioinformatics manuscript knows that two competent analysts handed the same dataset will produce different (sometimes contradictory) conclusions, and asking a model to mimic any single analyst’s path is its own kind of overfitting. Grounding answers in objective properties of the data (what organism is this crystal structure from, what gene was knocked out, who’s the parent of sample X) sidesteps that.

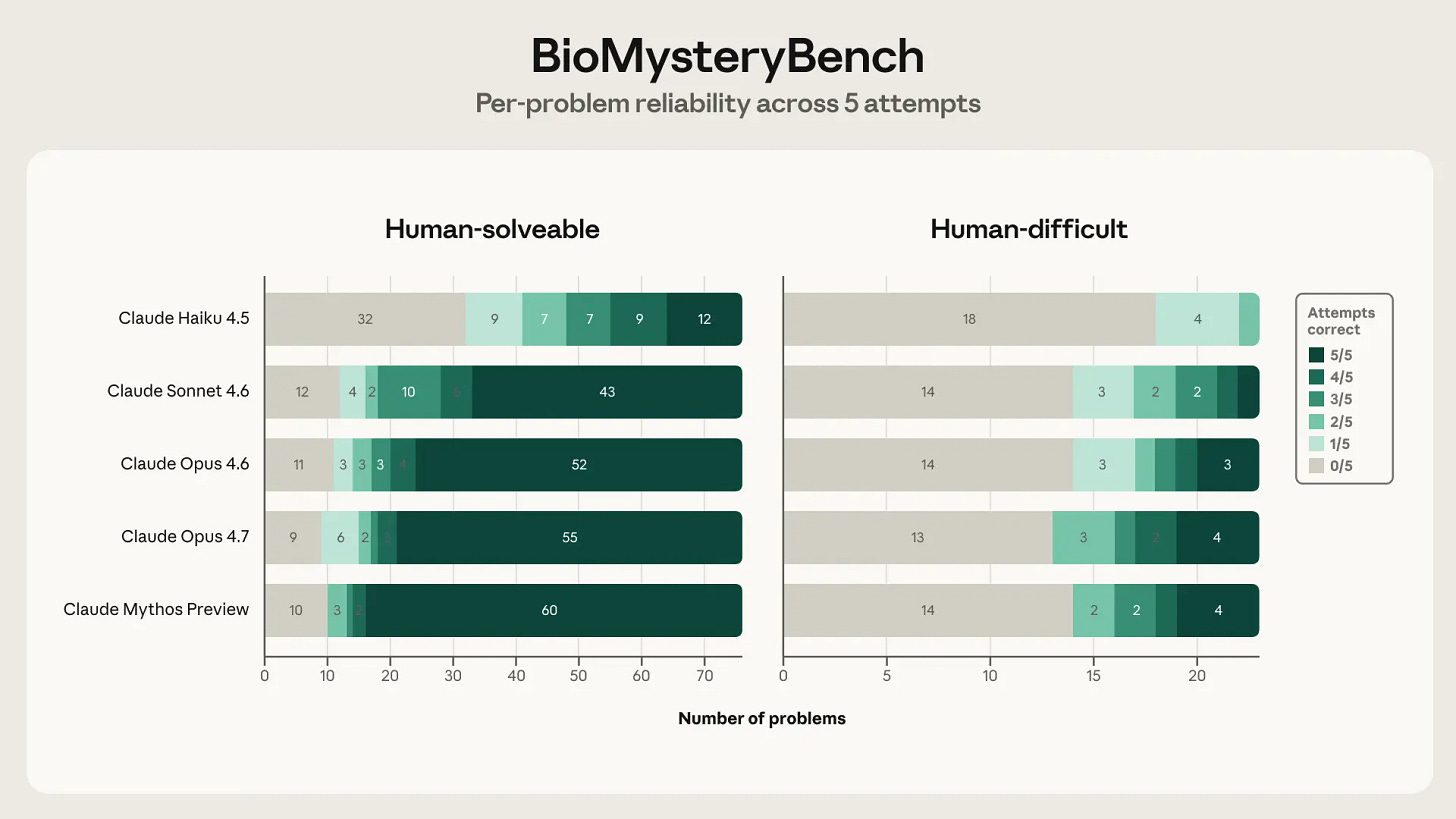

The headline result: Claude Mythos Preview solves about 30% of the human-difficult set, where “human-difficult” means a panel of up to five domain experts collectively could not solve it. That’s the number that will get quoted. The number I think actually matters is buried further down, in the analysis Mythos itself wrote about reliability:

On the human-difficult set... a much larger fraction of each model’s correct answers come from problems it solves only once or twice in five tries.

The headline accuracy gap between the easy and hard sets understates what’s actually happening: on hard problems, the model is often stumbling onto a reasoning path it can’t reliably reproduce. This matters a lot for anyone who would actually deploy these things in a research workflow, where “right once out of five tries” is closer to a nuisance than a capability.
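To make the reliability point concrete, here is a minimal sketch of how the same set of runs can look very different depending on which summary you report. The per-problem counts below are made up for illustration (they are not BioMysteryBench data), and the function name, the "solved in at least 4 of 5 tries" threshold, and the "fragile" label are my own assumptions rather than anything Anthropic defines.

```python
# Hypothetical per-problem results: number of correct runs out of 5 attempts.
# These numbers are invented for illustration; they are NOT benchmark data.
TRIES = 5
easy_correct_counts = [5, 5, 4, 5, 0, 5, 3, 5]
hard_correct_counts = [1, 0, 2, 0, 5, 1, 0, 0]


def summarize(correct_counts, tries=TRIES, reliable_at=4):
    """Summarize repeated-attempt results for one problem set.

    reliable_at is an assumed threshold: a problem counts as reliably
    solved if at least this many of the attempts were correct.
    """
    n = len(correct_counts)
    total_correct = sum(correct_counts)
    # Correct answers that come from problems solved only once or twice.
    fragile_correct = sum(c for c in correct_counts if 0 < c <= 2)
    return {
        "mean_accuracy": total_correct / (n * tries),
        "solved_at_least_once": sum(c > 0 for c in correct_counts) / n,
        "solved_reliably": sum(c >= reliable_at for c in correct_counts) / n,
        "fragile_share": fragile_correct / total_correct if total_correct else 0.0,
    }


easy = summarize(easy_correct_counts)
hard = summarize(hard_correct_counts)
for name, stats in (("easy", easy), ("hard", hard)):
    print(name, {k: round(v, 3) for k, v in stats.items()})
```

In this toy data, half of the hard problems are solved at least once, but only one in eight is solved reliably, and a large share of the hard set's correct answers come from problems solved once or twice. That gap between "ever solved" and "solved reliably" is the thing a single headline accuracy hides.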

A few caveats. The human-difficult set is small (23 questions after QC), so I’d be careful about reading too much into any single model’s number on it. The benchmark was developed at Anthropic and tests Anthropic models, but Genentech/Roche’s independently developed CompBioBench showed similar results, which I’d consider meaningful external validation. The qualitative analysis of strategies (that Claude sometimes pattern-matches across pretraining knowledge in ways human experts can’t, and sometimes runs multiple methods and triangulates) lines up with how I’ve seen it behave in my own bioinformatics work. The “knowing when you don’t know and trying three approaches” behavior is useful, and it’s also one of the more expensive things the model does in terms of tokens, so I’m curious whether it sticks around as inference costs come under pressure.
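To put a rough number on the small-sample caveat, here is a sketch of a Wilson score interval for a proportion. It assumes "about 30%" of 23 questions corresponds to 7 solved, and it treats each question as a single independent pass/fail trial, which ignores the repeated-attempt structure of the actual evaluation. Both are my simplifications, not figures from the benchmark write-up.

```python
import math


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion.

    z=1.96 corresponds to an approximate 95% interval.
    """
    if n <= 0:
        raise ValueError("n must be positive")
    p_hat = successes / n
    z2 = z * z
    denom = 1 + z2 / n
    center = (p_hat + z2 / (2 * n)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / n + z2 / (4 * n * n)) / denom
    return center - half_width, center + half_width


# Assumption: ~30% of 23 questions is taken to mean 7 solved.
low, high = wilson_interval(7, 23)
print(f"7/23 solved: approx. 95% interval {low:.1%} to {high:.1%}")
```

Under those assumptions the interval runs from roughly 16% to 51%, which is wide enough that modest differences between models on this subset should not be over-read.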

The benchmark itself is available on Hugging Face.

3. USC takes $200M to “infuse” AI across the university

The NYT reports that USC is taking a $200 million gift from Mark and Mary Stevens (Mr. Stevens being a Sequoia Capital partner, Nvidia board member, and USC alumnus) to integrate AI across academic disciplines. Most of the money is earmarked for new faculty hires in areas like health care, cybersecurity, and (this being LA) the arts, with some going to compute. The university is renaming its computing school for Stevens and converting it into a school of AI, on top of a planned bachelor’s in AI launching later this year.

USC’s president, Beong-Soo Kim, was admirably honest about the strategic logic. Universities can’t outspend the private sector on frontier model development, so they should focus on places where they can add distinctive value, meaning practical applications across domains.

The bottleneck for universities trying to do AI well is faculty (not buildings or compute), and faculty hiring at this scale runs into the same wall every time: the people you want already have offers from OpenAI or Anthropic at multiples of academic salary. Stevens acknowledged this in the article (“the jockeying for top AI thinkers could be costly”), and Kim said hiring would take about a year. I’d take the over on that timeline. The notion of “applying AI across disciplines” is right in the sense that it’s where the marginal value is, and it’s also a cop-out in the sense that “applied AI in [field]” is what every department and every search committee is going to say from now until the heat death of the academic job market. The schools that do something distinctive will be the ones that can answer specifically what they mean by it. USC has not yet, though to be fair the gift is two days old.

The comments on the article (a few “Reader picks” are quoted at the end of this post) have a very different sentiment than the press release.

4. Yihui Xie on vibe-coding in a language he doesn’t know

Yihui Xie (creator of knitr, bookdown, blogdown, and roughly half the R Markdown ecosystem the rest of us depend on) wrote a reflection on AI-assisted programming. Yihui resisted Cursor for a long time, then finally tried GitHub Copilot on a project in Rust (which he doesn’t know) to build an R package called tinyimg. It worked. He shipped a Rust-based R package in days without ever cloning the repo to his local machine.

The essay captures the emotional cost of working with a coworker who doesn’t sleep. Yihui notes that AI is “really, really, really good at the boring routine work” (yes), but…

Whenever I think of this knowledgeable always-on co-worker, I feel both excited and exhausted.

The model is tireless, the user is not, and the temptation to assign tasks before bed and check in the morning is real. I’ve written about this.

Yihui compares it to addiction, somewhat unironically, with a riff about how the first three words babies learn are “No, Mine, More.” The other prediction is what he calls “software proliferation,” a near future where centralized development becomes history and everyone has their own personalized formatter, linter, IDE, language, and distro. He’s mostly right, with the caveat that personalized software has the same problem as personalized RAG pipelines: maintenance debt accumulates, and the person paying it is you. The model can write the thing. It cannot care about the thing six months from now when an upstream dependency breaks.

5. NIH Highlighted Topics

NIH’s highlighted topics are not NOFOs. They’re scope statements: the listed Institutes, Centers, and Offices are saying they’d consider competitive an investigator-initiated app submitted through a parent announcement that fits the topic. Here are a few recent ones that caught my attention.

On the methods-and-tools side, three topics overlap. The chatbot research topic is asking for empirical work on the benefits, harms, and safeguards of chatbot use in health contexts, with explicit language about automation bias, behavioral dependency, substitution for professional care, and benchmarking across models. The companion topic on scientific rigor, transparency, and replicability explicitly invites AI-driven tools for assessing whether rigor practices were performed, and AI-driven tools for data harmonization and metadata generation. And the computational modeling of complex biological processes topic is the long-running NIH interest in multiscale biology. Bundle these and what you have is NIH telling computational and data science people: yes, we want the methods work, we want the AI evaluation work, and we want the rigor-engineering work.

On the workforce-and-ecosystem side, three more. Quantum information science for biomedical applications reads as a hedge against the possibility that quantum sensing or quantum-classical hybrid algorithms hit useful regimes for imaging, biomarker detection, or biomolecular simulation in the next few cycles. Nobody knows whether quantum has a near-term biomedical payoff, and NIH is putting up a small umbrella in case it does. Training and career development in dissemination and implementation science is a workforce signal: NIH wants more T32-style and K-mechanism training in D&I methods, which has been a steady drumbeat from the agency for years and is still apparently underfunded relative to the demand. And the “science of science” topic asks for research on the biomedical research ecosystem itself: workforce dynamics, peer review, team science, translation bottlenecks, and the economic returns to research investment.

Highlighted topics route through parent announcements, so the application mechanics are the standard ones. The expiration dates are one year from posting, so don’t sit on these. And the participating ICOs vary by topic, so read the specific page and contact the listed PO before drafting.

Grab bag

New ARPA-H program: Intelligent Generator of Research: an AI-enabled system that identifies knowledge gaps and designs optimal experiments based on these models and wires these up to a cloud lab.

Anthropic strikes a deal with SpaceX for compute, doubling Claude Code rate limits, and removing peak hours limit reductions.

Mike Koeris: On Biological Data Generation: More Is Different, and So Is the Data

Papers, etc:

CellVoyager: AI CompBio agent generates new insights by autonomously analyzing biological data

SecureMaxx: A Lightweight Sequence Screening Tool for Agents

Toward life with a 19–amino acid alphabet through generative artificial intelligence design

A transparent universal credit system to incentivize peer review

Hash functions in nucleotide sequence analysis | Genome Research

A few “Reader picks” comments on the USC article:

Almost all peer review published evidence of A.I. in education shows lower critical thinking, resiliency, and problem solving skills when students use A.I. even for short periods of time.

I see University of Southern California wishes to totally remove any intellectual or creative legitimacy it might still have.

Wanting to “integrate” AI into the arts? Soulless, morally bankrupt university. What a shame.

USC administrators clearly don’t care that faculty are in charge of the curriculum. What do USC faculty have to say about this?