De-slop the text you shouldn't be writing anyway

A Claude skill to remove all the AI patterns from AI-generated text. Useful for the bureaucratic writing you don't want to write anyway.

I judiciously use AI to help with writing and editing.1 I never let AI speak for me.2 And I typically don’t use AI for first drafts —3 this is where the thinking happens, where you actually have to think about how prior art fits into your current work, how data supports an argument, and where the gaps are.4

That’s my typical red line. However, some writing exists purely as bureaucratic packaging, and I’d rather spend zero minutes on it.

Cover letters for journal submissions are the obvious case IMHO. There’s really no reason for these at all. You state the title, summarize the contribution in two sentences, confirm it’s not under review elsewhere, and move on.5 Other entries in this category:6 data management plans, facilities and equipment descriptions (given the specs you already have on file), budget justifications given a budget spreadsheet and a research strategy (“The PI requests 1.2 calendar months of effort to provide scientific direction and oversight and blah blah blah...”). And so on.

For these tasks I do use AI to write my first draft and edit as needed. But default LLM output usually reads like default LLM output. The tells are well-documented at this point: filler transitions, “the ever-evolving landscape,” em dashes on every line, self-answered rhetorical questions, it’s not X it’s Y, let that sink in. Let an LLM write your cover letter unedited and the reviewer (if they’re human) can probably tell.

I built a Claude skill called deslop to strip these patterns out. It teaches Claude to identify and remove AI writing tropes across about 30 categories, scores output on a 50-point rubric (directness, rhythm, trust, authenticity, density), and includes before and after examples for scientific writing, blog posts, and grant narratives. The deslop GitHub repo has the full catalog, along with credit where it’s due (see the README).7

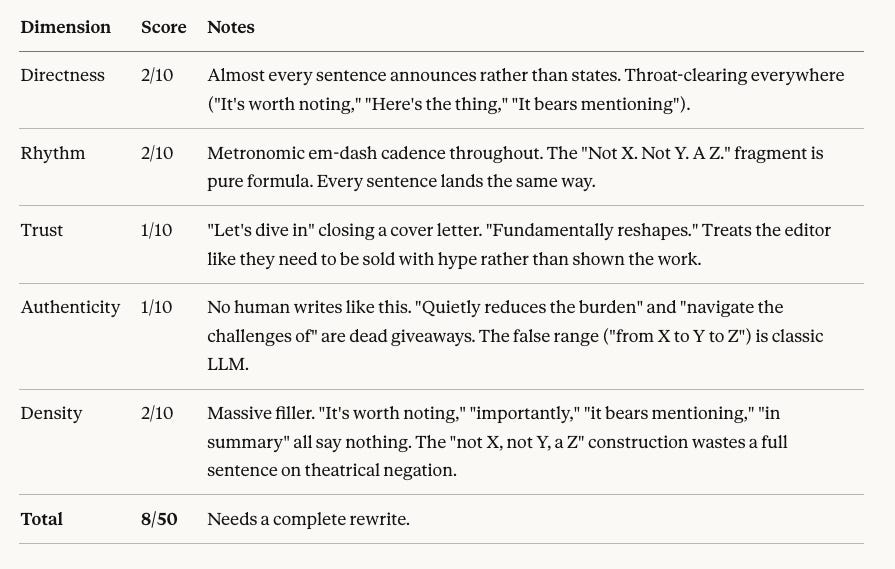

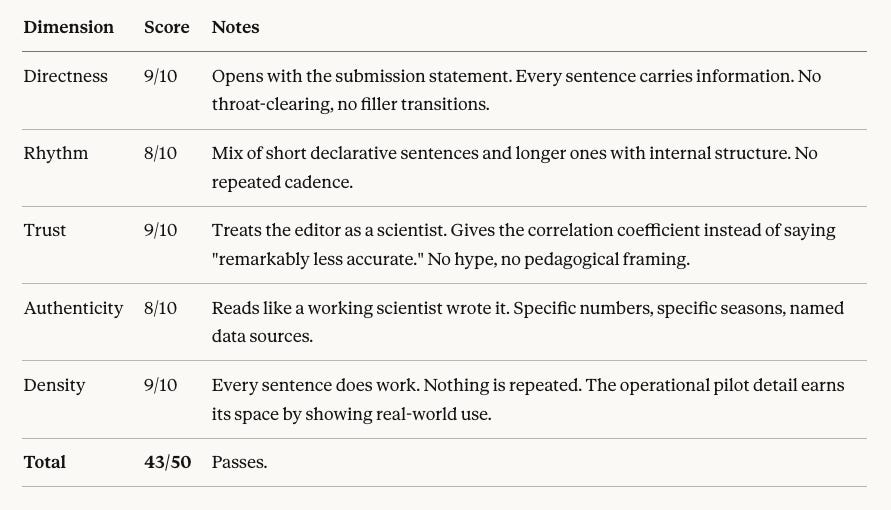

As a demo, I had Claude write a cover letter for a paper that I’ve already published (Nagraj et al. 2025, PLOS ONE, blog post here), as if I’m submitting it to PLOS ONE, with every AI trope cranked up. It scored 8/50. Then I ran the deslop skill on the same content. The rewrite scored 43/50. Specific numbers replaced vague claims, filler transitions disappeared, and it read like a person wrote it for a purpose rather than a model producing plausible cover-letter-shaped text.

The skill works on any chat on claude.ai, Claude Code, or the desktop app. Clone the repo, install the skill, ask it to deslop or write like a human, and the patterns get filtered at generation time instead of in post-hoc editing.

Here’s the before. Yes, it’s utterly ridiculous (yet reads like most X/LinkedIn posts these days).

It’s worth noting that methods for reviewing epidemiological signals serve as a critical component of the ever-evolving landscape of public health surveillance. Here’s the thing: implausible forecasts and faulty surveillance data don’t just bias ensemble models — they erode trust in the very systems designed to protect public health. We are pleased to submit our manuscript, “PLANES: Plausibility Analysis of Epidemiological Signals,” for consideration in PLOS ONE. In this paper, we delve into a novel, robust approach for assessing forecast plausibility and surveillance data integrity — one that is multi-dimensional, flexible, and open-source. The result? A scoring system that fundamentally reshapes how modelers and public health stakeholders navigate the challenges of data quality review. Our analysis of 2022-23 FluSight forecasts highlights that forecasts flagged by multiple PLANES components were remarkably less accurate on average, underscoring the approach’s practical utility for operational forecasting — from pre-submission quality control to ensemble weighting to surveillance system monitoring.

Despite these challenges in the current state of plausibility assessment, we believe PLANES fills an important gap. Not a replacement for human judgment. Not a fully automated system. A decision-support tool that quietly reduces the burden of manual review while leveraging historical data to codify nuanced expectations about signal behavior. It bears mentioning that the accompanying rplanes R package — available on CRAN and GitHub — makes the approach accessible to practitioners across federal, state, and local jurisdictions. Importantly, we have already piloted rplanes in operational settings, including the 2023-24 FluSight season, reflecting broader trends toward more standardized and reproducible forecasting workflows. In summary, we believe this work represents a meaningful contribution to the growing body of literature on epidemiological data integrity, and we look forward to the opportunity to share it with the PLOS ONE readership. Let’s dive in.

Here’s how it scored:

Here’s what the text looks like after running /deslop.

We submit “PLANES: Plausibility Analysis of Epidemiological Signals” for consideration in PLOS ONE. The manuscript describes a multi-dimensional scoring method for assessing the plausibility of infectious disease forecasts and surveillance data, delivered through an open-source R package (rplanes, available on CRAN). PLANES uses historical observed data to seed location-specific baseline characteristics, then evaluates signals across seven components, each producing a binary flag that rolls up into an ordinal score. In a retrospective analysis of over 29,000 FluSight forecasts from the 2022-23 season, we found that forecasts with two or more components flagged had significantly higher weighted interval scores (r = 0.267, p < 0.0001), indicating worse predictive accuracy.

No codified plausibility heuristics currently exist for epidemiological signals; review, where it happens at all, is ad hoc and undocumented. PLANES addresses this gap with a method that works for both forecasted and observed signals at daily, weekly, or monthly resolution. We piloted rplanes operationally during the 2023-24 FluSight season to review both our submitted forecasts and the NHSN surveillance data used to train our models. The package is designed for human-in-the-loop use: it flags signals for review rather than censoring them automatically. We believe this work will be of interest to PLOS ONE readers working in disease forecasting, surveillance data quality, and public health decision support.

And the score:

Get the skill here: https://github.com/stephenturner/skill-deslop.

A year or two ago if you used AI at all for writing or editing it was the social norm to hide the fact that you did. “I didn’t use AI at all to write this” was some kind of badge of honor. As I write this in March 2026, I think that sentiment is going about the same as resistance to spell check, calculators, and other useful tools.

I follow Simon Willison’s rule here: if text expresses opinions or has “I” pronouns attached to it then it’s written by me. I don’t let LLMs speak for me in this way.

The em dash here and elsewhere in this newsletter reflects my stylistic preference and should not be interpreted as evidence of AI-assisted text generation.

These are the parts of being a scientist that I don’t want to atrophy.

Honestly there’s no reason for cover letters for journal articles to exist at all, and they certainly should not be mandatory. The compliance checks could be a checkbox on the submission portal, and the contributions are already summarized in the abstract. I’d be happy to never write one of these again.

I’m not suggesting that no one should be writing these. These are just a few things that I don’t want to write.

This skill was built in part by combining and synthesizing material from two open sources: (1) AI writing tropes catalog from tropes.fyi by Ossama Hassanein. The references/tropes.md file is adapted from this source, and trope patterns are integrated throughout the other reference files; (2) stop-slop from github.com/hardikpandya/stop-slop by Hardik Pandya. The phrase lists, structural patterns, before/after examples, scoring rubric, and quick checks draw from this project.

OMG I spent this past week trying to create exactly this but I fell down the rabbit hole of testing out various LLM-detectors. Too bad I didn't think to look for these much simpler approaches by Hassanein and Pandya. If I had known you'd be publishing `deslop` I'd have saved probably hundreds of thousands of Claude tokens 😅

Not putting the Data Management Plans in this same bucket! 😅 (Said as a Data Management Librarian.) Although now the NIH-funded researchers will only have to check Yes or No for most of the DMP, so they won't even need Claude for that anymore.