Closing my tabs (Aug 29, 2025)

RAND corp AIxBio report, AI accelerating: GPT-4b for stem cell reprogramming; AI is slowing down; UVA SDS on Bluesky; LLMs and education; Python needs R's CRAN; learning R+Python together

Happy Friday, colleagues. August has flown by at warp speed. I started a new job, and my backlog of (semi-) pleasure reading grows longer. This is my regular attempt to close out my browser tabs I’ve accumulated over the past week with blog posts, podcasts, papers, etc. in AI, data science, genomics, public health, programming, scicomm, and other miscellany. Enjoy!

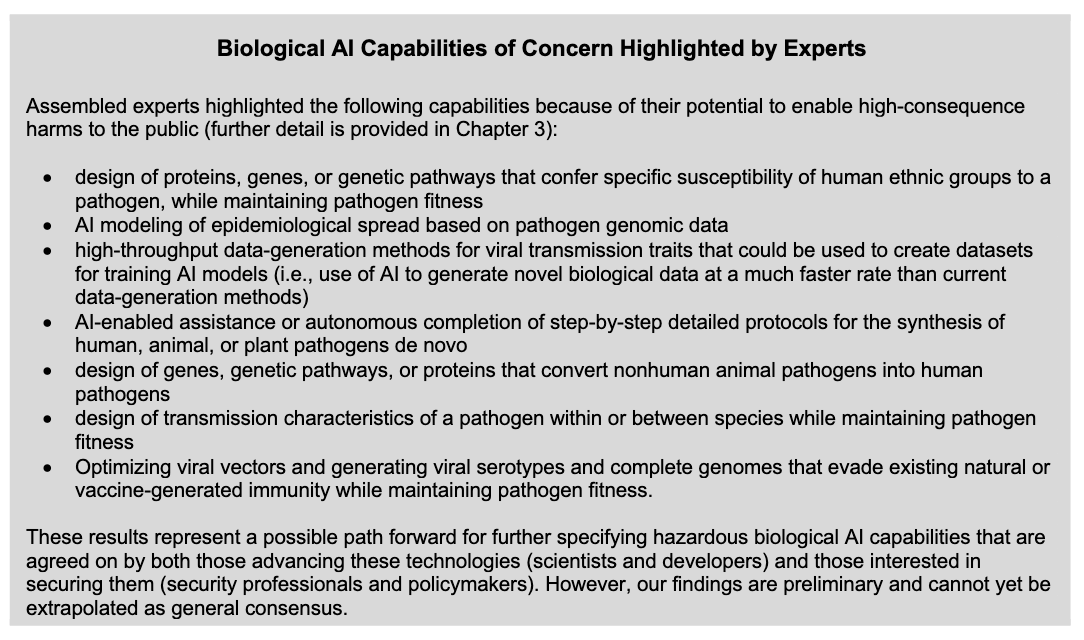

This RAND Corporation report, “Defining Hazardous Capabilities of Biological AI Models” was published earlier this week. From the overview:

On June 3, 2024, the Johns Hopkins Center for Health Security and RAND convened a group of scientists, AI-model developers, biosecurity experts, and policymakers to discuss the potentially hazardous capabilities of biological AI models—models that are trained on or capable of meaningfully manipulating substantial quantities of biological data. The interdisciplinary group clarified the definitions of concerning biological capabilities that would raise significant public health concerns, acknowledging that one of the capabilities that was identified as concerning already exists. Some capabilities have not yet been achieved but are under active development, while others may be years away or may never materialize. Accurately forecasting the direction of AI advances was not considered a prerequisite to initiating this discussion on security. Instead, the aim was to determine specific model capabilities that, if achieved, would pose a serious threat to global public health.

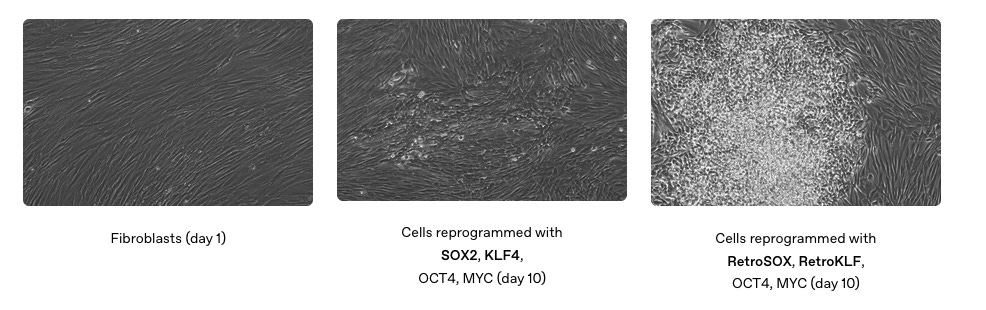

Accelerating life sciences research: OpenAI and Retro Biosciences achieve 50x increase in expressing stem cell reprogramming markers.

See also Alexander Titus’s essay on the announcement, “The Model That Reprograms Cell Identity.” Lots of free moonshot research proposal ideas in this one:

If AI can design better Yamanaka factors, what else can it do? The logical endpoint is tantalizing: generalized transcription factor cocktails capable of converting any cell type into any other. […] What if, instead of trial and error, we had a Rosetta Stone of cell identity, a computational map of transcription factor space that could be used to design cocktails for any conversion? The implications are staggering:

Medicine: damaged heart tissue after a heart attack could be rebuilt with cells reprogrammed directly in place.

Cancer: malignant cells might be pushed back into a normal state instead of being destroyed.

Aging: senescent cells could be rejuvenated at scale, restoring function across tissues.

Transplants: organ shortages could be addressed by building tissues from a patient’s own reprogrammed cells, eliminating rejection risk.

In short, a universal reprogramming toolkit could make biology as programmable as software.

Ezra Klein: “How ChatGPT Surprised Me” (gift link). This particular line about 2/3 into the column resonated with me: “But search is A.I.’s gateway drug. After you begin using it to find basic information, you begin relying on it for more complex queries and advice.” This rings true to me. I use AI all the time for helping with the drudgery of things I can easily verify (e.g., certain coding tasks), or relatively low-stakes tasks. But the ChatGPT window that’s always open on my computer and the app on my phone’s home screen are always begging me to use it for that next thing, and the temptation is hard to resist even when I know better.

Ezra’s column also led me to AI 2027. I haven’t read the whole thing yet but I’m looking forward to doing so.

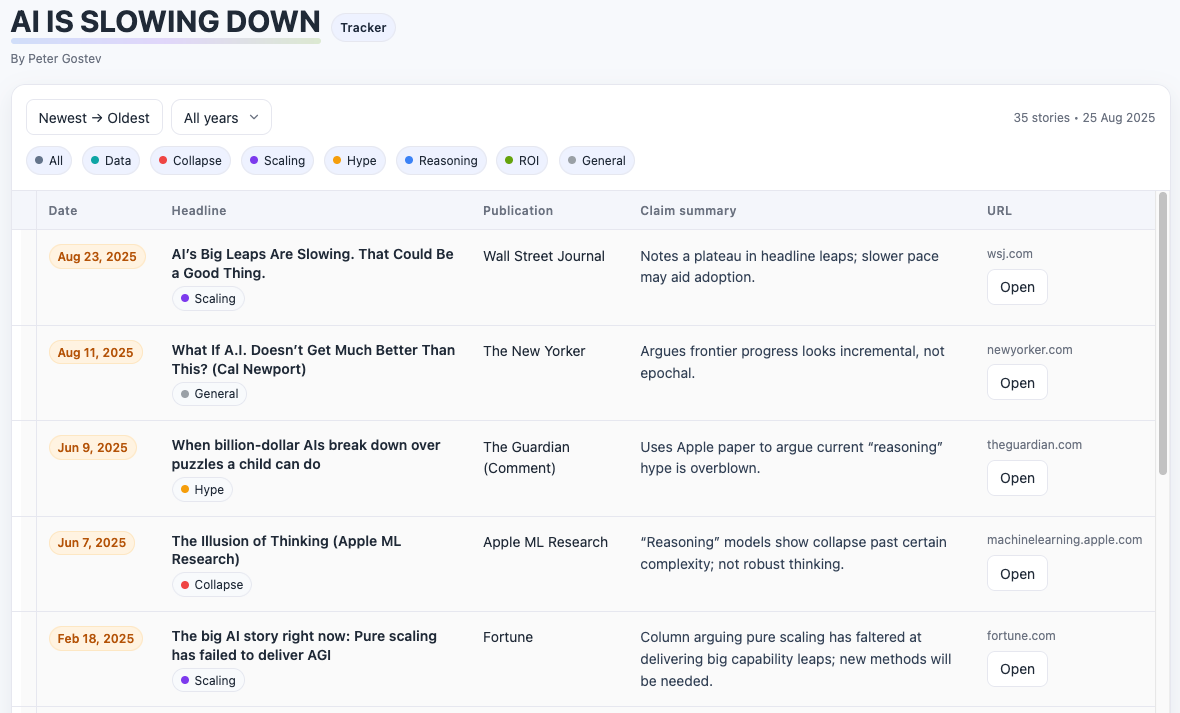

More on AI slowing down: John Cassidy published this piece in the New Yorker: The A.I.-Profits Drought and the Lessons of History. An M.I.T. study found that 95% of companies that had invested in A.I. tools were seeing zero return. It jibes with the emerging idea that generative A.I., in its current incarnation, simply isn’t all it’s been cracked up to be.

Related, I found it amusing that the AI is Slowing Down Tracker is an app running on Replit, a platform that helps you build web apps using AI.

And in news that should surprise literally no one: AI-generated scientific hypotheses lag human ones when put to the test.

The Only Real Solution to the AI College Cheating Crisis. TL;DR: oral examinations.

Bruno Rodrigues: Python needs its CRAN. I have my issues with CRAN, but at the end of the day when I install.packages(“somethingorother”) I know it’ll just work. Awesome package managers like uv and repositories like conda-forge don’t really solve this problem. Bruno calls for a curated layer on top of PyPI that enforces consistency, proposing PyPAN: the Python Package Archive Network. A little snippet from the post that everyone’s run into illustrates the core problem:

If you use Python to analyse data (I’m sorry for you) you’ve probably hit this issue: you install one package that requires

numpy<2, and another that requiresnumpy>=2. You’re cooked, as the youths say. The resolver can’t help you, because the requirements are literally incompatible. No one nor anything can help you. No amount of Rust-written package managers can help you. The problem is PyPI.

Nailong Zhang’s new book in progress: Another Book on Data Science for learning Python and R in parallel. Chapters available so far:

Introduction to R/Python Programming

More on R/Python Programming

data.table and pandas

Random Variables & Distributions

Linear Regression

Optimization in Practice

Machine Learning – A gentle introduction

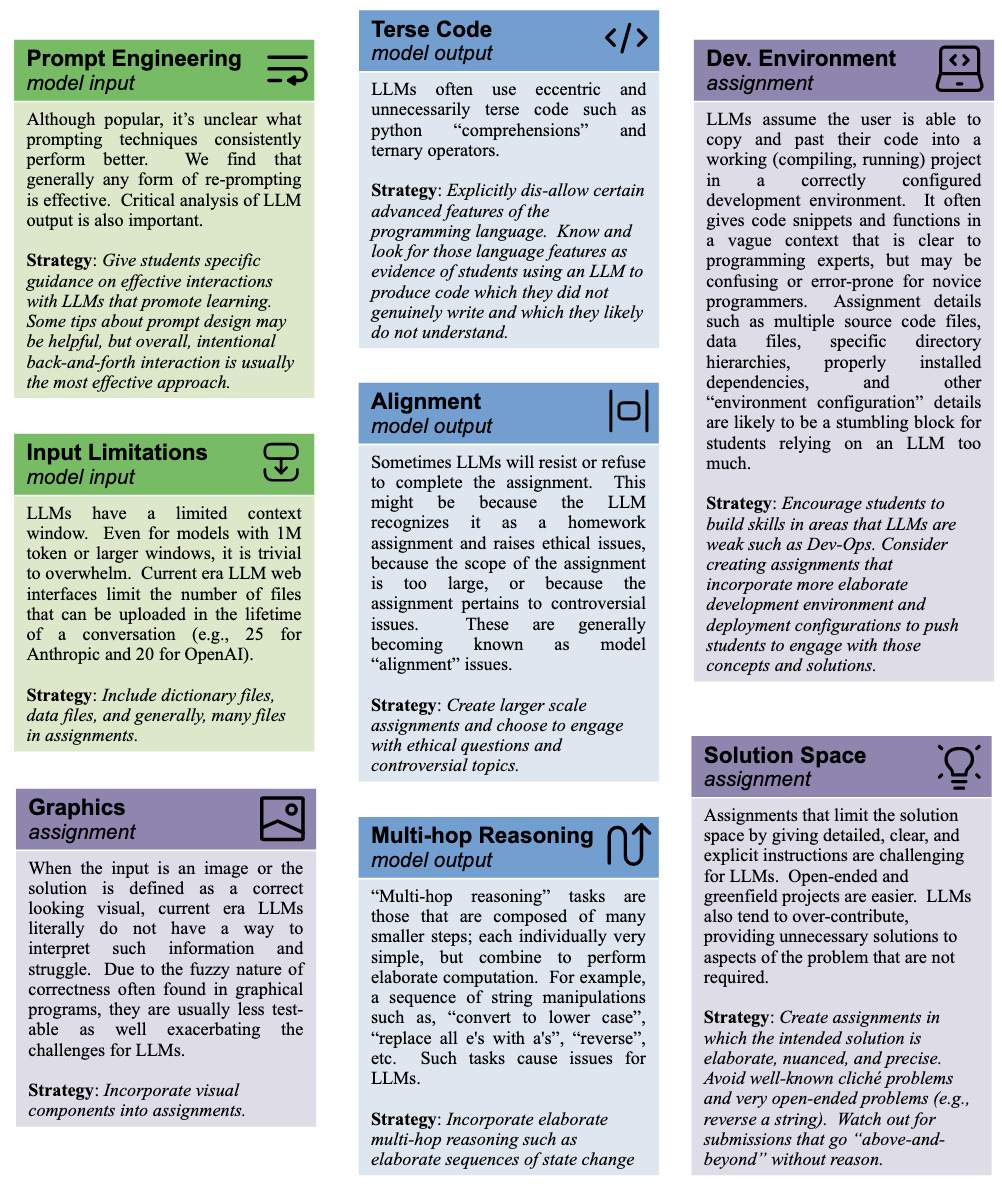

A conference paper from the ACM Technical Symposium on Computer Science Education (SIGCSETS): Designing LLM-Resistant Programming Assignments: Insights and Strategies for CS Educators. I recently attended an academic retreat here at UVA where the topic of education in the age of widespread AI use came up (an issue educators everywhere are surely dealing with). This conference paper provides a few insights, guidelines, and strategies based on their experiences using LLM to solve assignments.

The guest on this week’s episode of The Test Set podcast from Posit is Roger Peng on Sustaining data science in classrooms, code, and conversations. I listened to every episode of Roger and Hilary Parker’s podcast (Not So Standard Deviations) going all the way back to 2015. If you haven’t yet, the back catalog is still a great listen, especially the book club episodes on Design Thinking.

NYT: In Every Tree, a Trillion Tiny Lives: Scientists have found that a single tree can be home to a trillion microbial cells — an invisible ecosystem that is only beginning to be understood. The original paper this article covers was published earlier this month in Nature: A diverse and distinct microbiome inside living trees.

Ars Technica: Bluesky now platform of choice for science community. It's not just you. Survey says: "Twitter sucks now and all the cool kids are moving to Bluesky."

Related: my new academic home, the UVA School of Data Science is now on Bluesky, and my colleague here Alex Gates created the UVA SDS Starter Pack. Give these accounts a follow if you want to see who’s here and what we’re doing!

rOpenSci News Digest, August 2025: R-multiverse, links to rOpenSci talks at UseR 2025, rOpenSci at posit::conf(2025), new packages, tips for R package developers, more.

posit::glimpse() Newsletter for August 2025: posit::conf(2025), Positron, LLM tools (ellmer, ragnar, chatlas, vitals, mall), Quarto 1.8, package updates, learning resources, community updates.

R Weekly 2025-W35: Introducing g6R: a network widget for R; Introduction to Julia for R users, more.