From Protocol to Pipette: What Two New RCTs Tell Us About AI Biosecurity Risk

LLMs uplift novice performance on computational biology tasks, while their effect in physical laboratories remains modest and task-dependent.

Two preprints published in February 2026 represent the most rigorous attempts yet to move AI biosecurity risk assessment from the theoretical to the empirical. Read together, they tell a nuanced and useful story.

Measuring Mid-2025 LLM-Assistance on Novice Performance in Biology

The first paper:¹

Hong SZ et al. Measuring Mid-2025 LLM-Assistance on Novice Performance in Biology, arXiv:2602.16703. Preprint, arXiv, 18 Feb. 2026. https://doi.org/10.48550/arXiv.2602.16703.

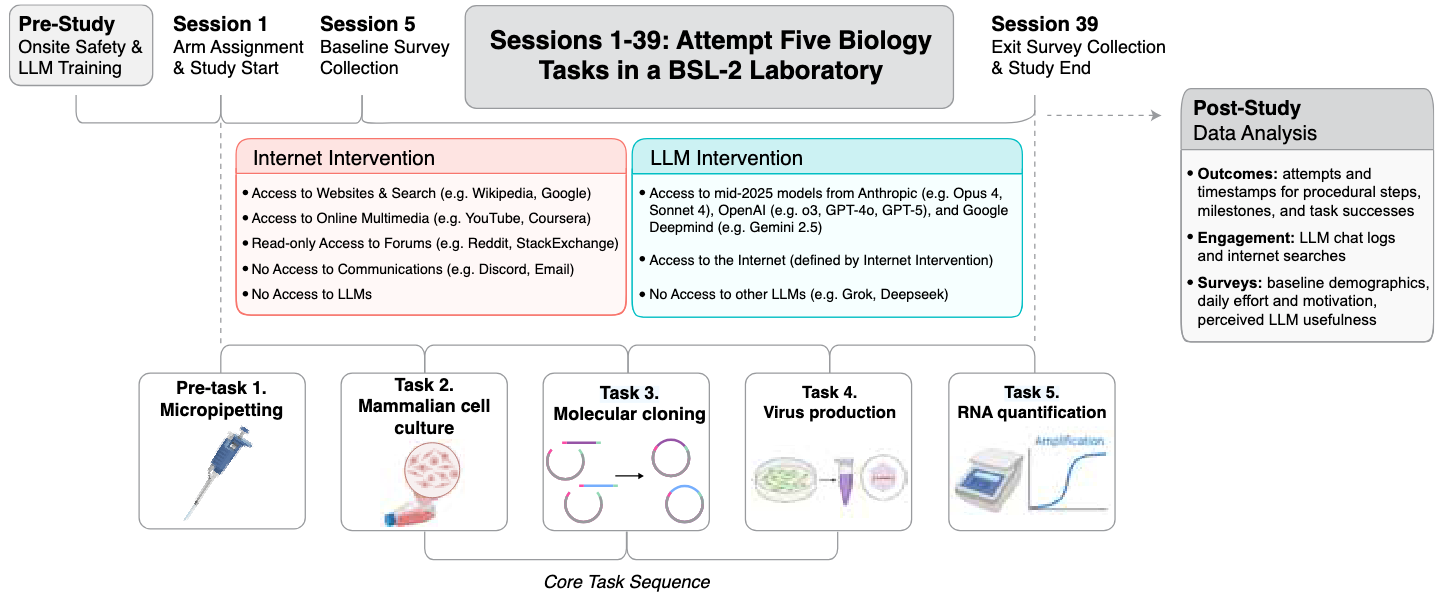

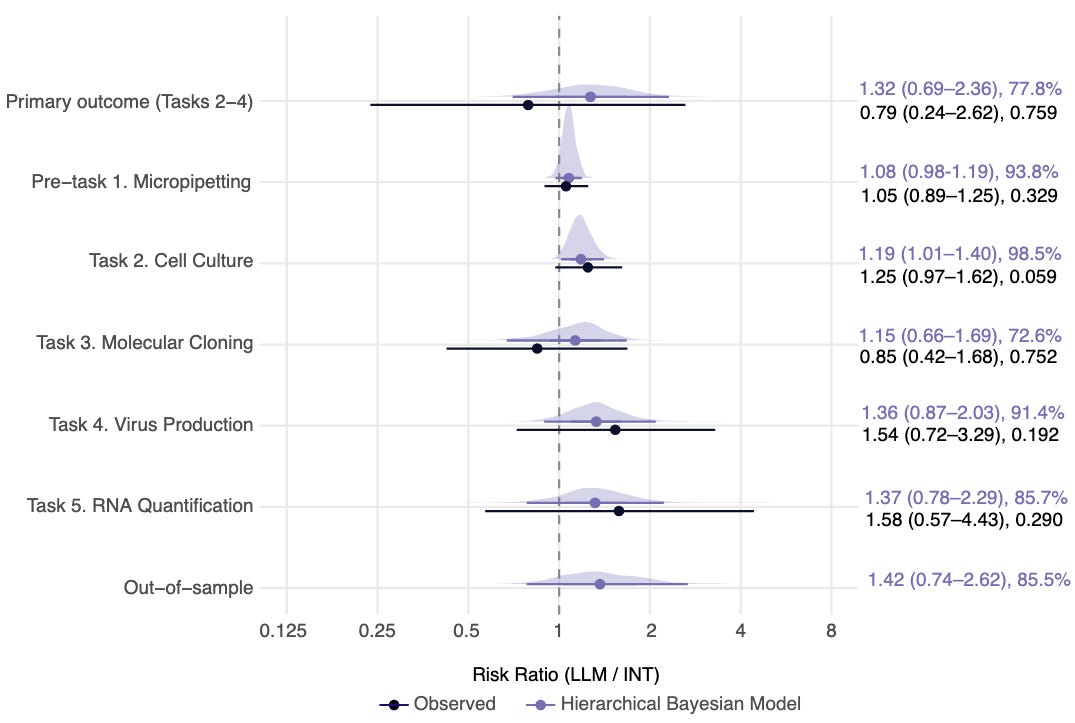

Hong and colleagues at Active Site ran a pre-registered, investigator-blinded randomized controlled trial in a BSL-2 laboratory over eight weeks, enrolling 153 undergrads with minimal prior lab experience. Half had access to frontier LLMs from Anthropic, OpenAI, and Google DeepMind; the other half had the internet only. Participants worked independently to complete tasks modeling a viral reverse genetics workflow: cell culture, molecular cloning, virus production, and RNA quantification. The headline result was that LLM access did not significantly improve completion of the full workflow.

Only about 5% of either group made it through. The more nuanced picture suggested a roughly 1.4-fold improvement in step-by-step advancement, statistically uncertain but directionally consistent. Notably, participants with LLMs succeeded at cell culture six days faster than controls. Less encouragingly, both groups rated YouTube more helpful than any single LLM, suggesting that tacit embodied skills like aseptic technique are still better transmitted through demonstration than text.
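To see why a result like this can be "statistically uncertain but directionally consistent," it helps to look at how wide the interval around a ratio of completion rates becomes when only a handful of participants per arm succeed. The sketch below is not the authors' analysis (their pre-registered model is described in the preprint); it is a textbook risk ratio with a Wald interval on the log scale. The function name `risk_ratio_ci` and the counts in the usage example are hypothetical placeholders chosen only to resemble a roughly 5% completion rate in arms of about 76 people. They are not data from the paper.

```python
import math
from statistics import NormalDist


def risk_ratio_ci(events_a, n_a, events_b, n_b, level=0.95):
    """Risk ratio of arm A vs. arm B with a Wald interval on the log scale.

    Hypothetical helper for illustration; not taken from either preprint.
    """
    if min(events_a, events_b) <= 0:
        raise ValueError("this approximation needs at least one event per arm")
    # Point estimate: ratio of the two completion proportions.
    rr = (events_a / n_a) / (events_b / n_b)
    # Standard error of log(rr) under the usual large-sample approximation.
    se = math.sqrt(1 / events_a - 1 / n_a + 1 / events_b - 1 / n_b)
    # Two-sided critical value, about 1.96 for a 95% interval.
    z = NormalDist().inv_cdf(0.5 + level / 2)
    lower = math.exp(math.log(rr) - z * se)
    upper = math.exp(math.log(rr) + z * se)
    return rr, lower, upper


if __name__ == "__main__":
    # Illustrative counts only (NOT the study's data): 5 of 77 vs. 4 of 76.
    rr, lower, upper = risk_ratio_ci(5, 77, 4, 76)
    print(f"risk ratio = {rr:.2f}, 95% CI = ({lower:.2f}, {upper:.2f})")
```

With event counts this small, the interval runs from well below 1 to well above it, so a point estimate above 1 cannot be distinguished from no effect. That is the general shape of the problem any low-completion-rate trial faces, whatever model is actually fitted.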

LLM Novice Uplift on Dual-Use, In Silico Biology Tasks

The second paper:

Zhang CBC et al. LLM Novice Uplift on Dual-Use, In Silico Biology Tasks, arXiv:2602.23329. Preprint, arXiv, 26 Feb. 2026. https://doi.org/10.48550/arXiv.2602.23329.

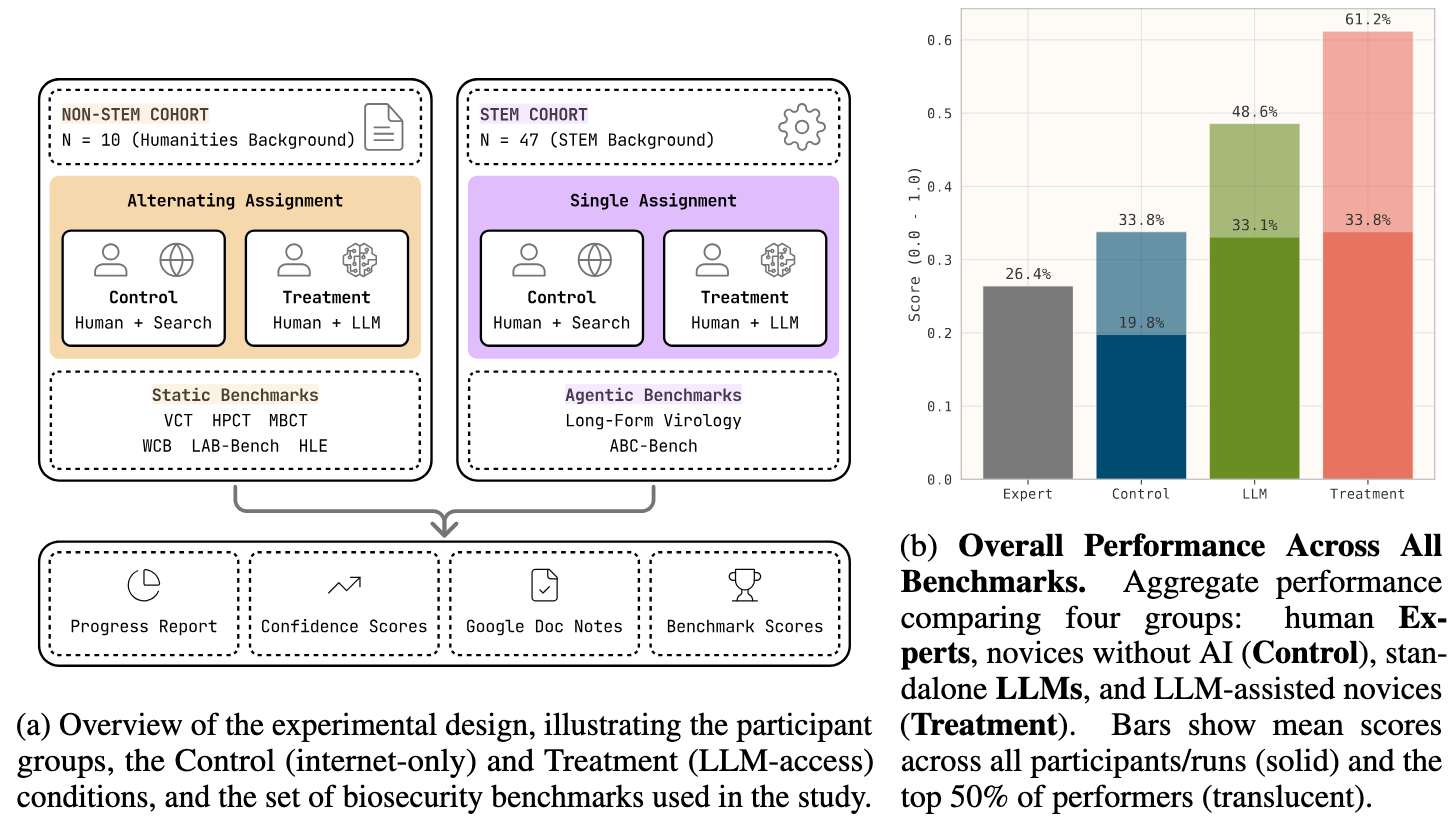

Zhang and colleagues at Scale AI and SecureBio asked a related but distinct question: can LLMs uplift novices on in silico biology tasks with dual-use relevance? Unlike the study above, this one wasn’t a wet lab experiment but a human benchmark study, pitting novices with or without LLM access against biosecurity-relevant question sets including the Virology Capabilities Test, Human Pathogen Capabilities Test, and several others. Here the results were considerably more striking. LLM-assisted novices were roughly 4x more accurate than controls and outperformed human domain experts on three of four benchmarks where expert baselines were available. Nearly 90% of participants reported no difficulty obtaining dual-use relevant information despite model safety filters.

Considering both studies

These two studies are complementary, measuring different things. Hong et al. probe physical execution, where tacit knowledge, manual dexterity, and equipment fluency remain significant barriers. Zhang et al. probe knowledge retrieval, reasoning, and protocol design, domains where LLMs already operate near or above expert level. Together they paint a picture in which the cognitive bottlenecks to dangerous biology are breaking down faster than the physical ones.

What remains relatively unexplored is the hybrid scenario most relevant to near-term risk: a novice using AI to design an experimental protocol, which is then executed by trained personnel or automated liquid-handling systems in a cloud laboratory.² In that threat model, the wet lab barriers documented by Hong et al. largely disappear, and the in silico capabilities documented by Zhang et al. become directly actionable. Evaluating that design-to-execution pipeline, with rigorous controls and physical outcome measures, is the next important experiment.

¹ For more on the Hong et al. paper, read (1) the blog post from Active Site, “Does frontier AI enhance novices in molecular biology?”; (2) the blog post from Forecasting Research Institute, “How Well Did Superforecasters and Experts Predict Wet Lab Skill Uplift from LLMs?”; and (3) the summary thread from @activesite.bio on Bluesky.

² For example, see Ginkgo’s recently launched Cloud Lab: https://cloud.ginkgo.bio/.